MIDI notes

Communicating MIDI

Serial MIDI

The classic, seen on thirty+ years of devices.

tl;dr: a serial port at 31250 baud, used in one direction, wired to power an optocoupler's LED. This is pretty smart and also has some footnotes.

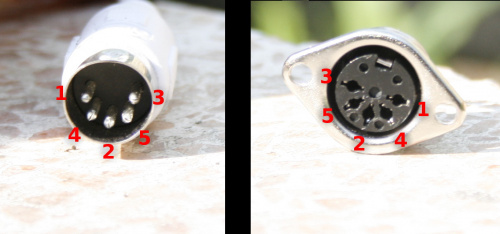

Plug and pinout

The plug is the 180-degree 5-pin DIN variant.

(note that some pinout charts use their own numbering, so pay attention)

- 1 NC

- 2 Shield (devices typically disconnect this on the receiving side)

- 3 NC

- 4 Source

- 5 Sink

Each device connection (pins 4+5) is a ~5mA current loop (rather than voltage-referenced),

so being able to drive ~5mA comfortably matters more than a precise voltage.

...and (arguably because) in most devices you effectively drive the LED part of an optocoupler, so you mostly just need to add a reasonable resistor for the voltage you have (and the max current your output pin can sink, which these days is rarely limiting).
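As a rough sketch of that resistor arithmetic (Ohm's law over the whole loop), in Python. The values are assumptions for illustration: a 5V sender with 220 Ohm on both pin 4 and pin 5, a receiver with 220 Ohm in series with its optocoupler LED, and a forward voltage of about 1.5V for that LED. The model ignores the driver's own voltage drop and cable resistance, so treat the results as ballpark figures and check your parts' datasheets. The function names are made up.

```python
def loop_current_ma(supply_v: float, led_vf: float, resistors_ohm: list) -> float:
    """Approximate MIDI loop current in mA.

    Simplified model (assumption): the LED is a fixed forward-voltage drop,
    and driver output drop and cable resistance are ignored.
    """
    return (supply_v - led_vf) / sum(resistors_ohm) * 1000.0


def extra_resistance_ohm(supply_v: float, led_vf: float, target_ma: float,
                         existing_ohm: float = 0.0) -> float:
    """Series resistance still needed to reach target_ma, given what is already in the loop."""
    total_needed = (supply_v - led_vf) / (target_ma / 1000.0)
    return total_needed - existing_ohm


# Classic 5V sender into a 220 Ohm receiver: (5 - 1.5) / 660 Ohm, roughly 5.3 mA
print(round(loop_current_ma(5.0, 1.5, [220, 220, 220]), 1))

# A hypothetical 3.3V sender aiming for ~5 mA into that same receiver would need
# about 140 Ohm in total on its own side (split over pins 4 and 5)
print(round(extra_resistance_ohm(3.3, 1.5, 5.0, existing_ohm=220)))
```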

With standard MIDI, pins 1 and 3 are unconnected in the sockets on both ends.

So MIDI cables can get away with having only 3 wires, but often have 5. There are non-standard devices that do use the extra pins.

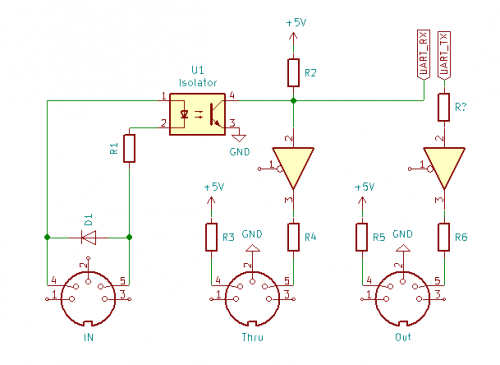

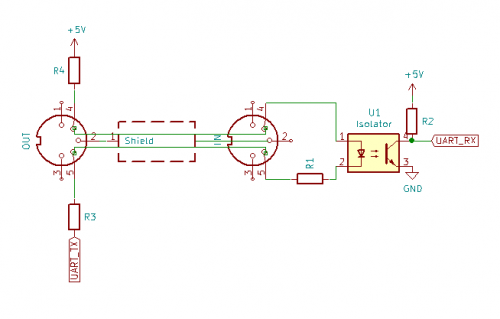

In the schematic on the right:

- D1 is used to protect the optocoupler from reverse polarity, but also from cable effects. Most any fast-switching diode will do.

- In DIY you could omit it for quick tests, but arguably DIY is where this protection is a good idea more than anywhere else

- R1 is a current-limiting resistor for the optocoupler's LED.

- frequently 220 Ohm, though the exact value isn't critical

- R2 is a current limiter and pullup(verify), and is often a higher value (0.5k .. 10kOhm); check your optocoupler's specs

- The triangles indicate some sort of current buffer

- they're in the original MIDI specs because various output pins cannot drive 5mA directly (in those specs it's two triangles, i.e. two inversions; two gates of a single 74LS14 hex inverter IC were a simple and cheap solution)

- These days you're probably using a microcontroller, whose pins tend to be buffered and able to drive on the order of 30mA, so you could omit this

- If you want to consider protection and/or ease of repair:

- maybe have that IC be something cheap and put it in an IC socket, or

- maybe put a transistor in between (with a current-limiting resistor on its base, here R4 and R6), to limit the current through that IC.

- cable shield should be connected only on the source side (avoids ground loops)

- This is handled within devices, mostly by not connecting the DIN barrel/pin 2 on IN sockets

- this way the cable doesn't have a direction - it just carries that shield

How do multiple devices work?

MIDI is one-directional.

In a chain, earlier devices can talk (only) to later devices, because that's the direction messages go.

This means the order devices are hooked up in can matter.

You'd generally

- put things that only send messages early in the chain,

- put things that only produce sounds at the end,

- slightly confuse yourself about things that do both

- which includes PC DAWs

MIDI hardware may have one, two, or three plugs (which they have varies with the kind of device)

- MIDI IN - usually means it can play sounds in response to messages

- MIDI THRU - a copy of the IN plug (electronically buffered, but otherwise direct)

- MIDI OUT - means it can be the origin of notes/messages

if you have too many devices

Note that THRU connections in series slowly add timing errors (due to optocoupler switching time). At the scale most people work at you are unlikely to ever notice this, but if you have a house full of devices in a single long chain, you may notice some latency from start to end. You can reduce it by adding splitters early (building a tree instead of one long chain), or, if it's only really going into a PC, by getting some more MIDI ins so you can have multiple shorter chains.

On 3.5mm TRS

Slimmer devices started using 3.5mm (and sometimes 2.5mm) TRS plugs on the device, with adapters to 5-pin DIN.

These weren't all wired the same way, so the MMA (MIDI Manufacturers Association) decided to set a standard.

...that included both of them, so it just gave them names:

Type A (verify)

- T - sink

- R - source

- S - ground

Type B

- T - source

- R - sink

- S - ground

So instead of it not working half the time, it's now labeled.

I've seen DIN-to-TRS boxes with a switch to support both, which is very simple to build (mainly a DPDT switch).

https://www.midi.org/midi-articles/trs-specification-adopted-and-released

MIDI and power

MIDI does not carry DC power. (This also helps it avoid having a shared reference/ground, and so avoid ground loop issues.)

Beyond the specs, some devices do something like this anyway.

In particular portable devices that need a little power, which is great, except there is no standard for

- polarity

- voltage (a bunch of 5V but I've seen 12V)

- pins (many seem to abuse pins 1 and 3; some others seem to use 1 and 2, or 3 and 2, even though using the shield is arguably not a great idea)

At the same time it is usually safe enough, in that even if you manage to plug the powering end of that directly into another device,

pins 1 and 3 are rarely connected internally (as they have no function).

That said, it might conflict with other non-standard uses on these pins.

(If you want to add a DC supply to your design, consider the slightly safer option of using a 7-pin DIN socket, in that you won't be able to plug the with-power 7-pin plug into any other device's 5-pin MIDI socket)

USB-MIDI

USB-MIDI means "MIDI was put into the USB specs, so USB devices can easily appear as MIDI devices to your programs".

Its protocol is largely modelled on serial MIDI packets.

- ...though it adds a few things, like a field called Cable Number, mostly for ease of routing(verify).
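For illustration, a minimal sketch of how a channel message gets wrapped for USB, based on the 4-byte event packet of the USB MIDI 1.0 class spec: the first byte carries the Cable Number in its high nibble and a Code Index Number (CIN) in its low nibble, followed by up to three MIDI bytes, zero-padded. It only handles channel messages, where the CIN happens to equal the status byte's high nibble; SysEx and system messages use other CIN values and are left out. The function name is made up.

```python
def usb_midi_packet(cable: int, midi: bytes) -> bytes:
    """Wrap one channel message (status 0x80..0xEF) in a 4-byte USB-MIDI event packet."""
    if not 0 <= cable <= 15:
        raise ValueError("cable number must fit in 4 bits")
    status = midi[0]
    if not 0x80 <= status <= 0xEF:
        raise ValueError("this sketch only handles channel messages")
    cin = status >> 4                              # for channel messages, CIN = status high nibble
    payload = bytes(midi[:3]).ljust(3, b"\x00")    # 2-byte messages get zero-padded
    return bytes([(cable << 4) | cin]) + payload
```

So a NoteOn on cable 0 becomes 09 90 3C 64, and the same NoteOn on cable 1 would start with 19 instead.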

Upsides

- The message pileup in serial MIDI is basically absent here

- because the transfer rate of USB is much higher, and devices are connected individually anyway

- (though note it's not a realtime bus, so there are still some small footnotes to this).

- Being part of the USB spec makes it almost as easy for DIY projects to speak USB-MIDI as to speak serial MIDI

- e.g. the Arduino Leonardo / Pro Micro can basically talk USB-MIDI directly

Arguables / limitations

- Distinct devices connected via USB-MIDI appear as distinct controllers with one MIDI device on them

- which allows better separation than channels alone would give

- but for the same reason there is more focus on routing within the host (usually a PC) than before.

- DAWs tend to help you; your own MIDI programs may need a little more care.

- you may no longer be able to connect devices to each other directly, without a PC

- though there are boxes that do this, and various DIY solutions [1]

Bluetooth

Expect at least 3ms to 6ms of added latency(verify) and possibly more like 10 or 20ms, depending on Bluetooth version and a few other practical details.

If it stays on the low end of that, that's good enough for many uses.

There are various DIY projects using Bluetooth LE (BLE) modules

RTP-MIDI

RTP-MIDI / rtpMIDI (a.k.a. AppleMIDI, but it's an open and unlicensed standard)

is MIDI over RTP, with RTP typically on top of UDP(verify). (So it has details like a recovery journal, which lets a receiver recover from lost packets without needing retransmission.)

So this usually amounts to MIDI over Ethernet or WiFi.

On LAN, latency should be about as good as serial MIDI,

and it can sometimes be a practical way to link many things together over a somewhat larger distance.

On WiFi you generally can't guarantee that latency always stays under an acceptable level (there is significant latency jitter, depending a bit on your environment, other users, etc.), so in the best case it just works perfectly, and in the worst case you can't even fix it.

Over the internet you'll have at least your broadband's latency, which you can assume is around 10ms on each side, even to your neighbor's house.

Mobile is more variable, but generally a little worse.

Note that latency jitter doesn't directly mean inaccuracy, see e.g. https://tools.ietf.org/html/rfc4696#section-6

but it does imply some tradeoffs and bounds.

OSX has had RTP-MIDI since Tiger (10.4); it's that thing called "Network" in the MIDI setup.

It's also been in iOS since 4.2 (so ~2010, iPhones around 3G)

Beyond that, the limited need and the existence of similar things (like OSC) mean it's not in widespread use, though there are implementations on many platforms, and a bunch of interesting DIY hardware that uses it.

To get it elsewhere, see e.g. Wikipedia's implementation list.

https://tools.ietf.org/html/rfc4696

Virtual MIDI devices

MIDI usually means a hardware way in or out of the computer - application to device, or device to application.

This does not cover the case of application to application, e.g. between DAWs, or from midi-generating app to DAW.

Virtual MIDI devices are basically something you can open as an in and out port, that does nothing more than repeat what it gets.

That way, one app can write to it, and another can read from it, which works out as a virtual MIDI cable.

On Windows, install something like loopMIDI

On OSX, adding a 'Virtual MIDI Cable' is what you want.

On Linux,

- on ALSA, look at snd-seq-dummy

Byte protocol

Some of this focuses on the original serial implementation. Things work a little differently in other variants, like USB-MIDI.

Note that the first byte of a message (the status byte) always has its highest bit set, and the rest of the message (the data bytes) always has it cleared.

This makes it very easy for the serial variant to

- ignore messages we don't know or care about

- quickly resync to a new message, if we happen to get confused somewhere, connect to the bus in the middle of a message, etc.

One exception in the literal sense is SysEx: its contents should not contain any bytes with their highest bit set, but it ends with one (0xF7).

But the point of this is much the same - we can ignore things we don't understand, and it pretty clearly marks the start and end of a SysEx (which is good because SysEx isn't a fixed size, like most other messages).
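A minimal sketch of how a receiver can lean on the high bit: any status byte starts a new message (which is what makes resyncing easy), and data bytes with no message in progress are skipped. It also handles running status (see the timing section further down) and passes single-byte Real Time messages (0xF8 and up) through even in the middle of another message. The data-byte counts per status are the standard MIDI 1.0 ones; the function and table names are made up.

```python
from typing import Iterator

# Data bytes after each channel status, keyed by the status byte's high nibble
CHANNEL_DATA_LEN = {0x8: 2, 0x9: 2, 0xA: 2, 0xB: 2, 0xC: 1, 0xD: 1, 0xE: 2}
# System Common statuses that carry data (the rest of 0xF1..0xF7 carry none)
SYSTEM_COMMON_DATA_LEN = {0xF1: 1, 0xF2: 2, 0xF3: 1}


def split_messages(stream: bytes) -> Iterator[bytes]:
    """Split a serial MIDI byte stream into complete messages."""
    running_status = None  # last channel status, reused when a message omits it
    msg = bytearray()      # message being assembled
    needed = 0             # data bytes still missing from msg
    in_sysex = False

    for b in stream:
        if b >= 0xF8:                  # Real Time: single byte, allowed anywhere
            yield bytes([b])
            continue
        if in_sysex:
            if b < 0x80:               # SysEx payload byte
                msg.append(b)
                continue
            in_sysex = False
            if b == 0xF7:              # proper end of SysEx
                msg.append(b)
                yield bytes(msg)
                msg = bytearray()
                continue
            msg = bytearray()          # any other status aborts the SysEx; handle b below
        if b & 0x80:                   # status byte: always starts a new message (resync)
            msg = bytearray([b])
            if b == 0xF0:
                in_sysex = True
                running_status = None
            elif b < 0xF0:
                running_status = b
                needed = CHANNEL_DATA_LEN[b >> 4]
            else:                      # System Common also cancels running status
                running_status = None
                needed = SYSTEM_COMMON_DATA_LEN.get(b, 0)
                if needed == 0:        # e.g. Tune Request; a stray 0xF7 is dropped
                    if b != 0xF7:
                        yield bytes(msg)
                    msg = bytearray()
            continue
        if not msg and running_status is not None:  # data with no status: running status
            msg = bytearray([running_status])
            needed = CHANNEL_DATA_LEN[running_status >> 4]
        if not msg:                    # stray data (e.g. we joined mid-message): skip it
            continue
        msg.append(b)
        needed -= 1
        if needed == 0:
            yield bytes(msg)
            msg = bytearray()
```

For example, a stream that starts mid-message (a stray 0x45) followed by a NoteOn, a running-status NoteOn, a clock tick, a short SysEx and a Program Change comes out as five clean messages, with the stray byte silently ignored.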

Message types are conceptually subdivided like:

- Channel Message

- Channel Voice messages

- Note On, Note Off, Aftertouch

- also PitchBend, Control Change, Program Change (because it's related to voices)

- Channel Mode messages - say what the voices should do in response to voice messages (see more below)

- ...note that these are sent as ControlChange messages (CC 120 through 127), which otherwise fall under Channel Voice

- System Message

- System Real Time messages - meant for synchronization between MIDI devices (that support this, relatively few(verify)).

- System Common messages - meant for all receivers, regardless of channel. Also used for some external hardware like tape (not seen very often).

- System Exclusive (a.k.a. SysEx) messages

- sent to a specific Manufacturer's ID

- mostly to allow manufacturer-specific communication (though there are some predefined universal ones)

- Also used to implement some extensions, like MIDI Machine Control

- Due to variable-length behaviour, these are terminated (so that implementations can properly ignore them)

Channel voice

The most central in the music-making sense:

- NoteOn

- 1001cccc 0nnnnnnn 0vvvvvvv

- where c is the channel (0..15 on the wire, shown to users as 1..16), n the note, and v the velocity

- note is C0 to G10 (8Hz..12kHz) in semitone steps, so e.g. middle C is value 60

- e.g. an 88-key keyboard will use ~three quarters of the full 0..127 range

- velocity of 64 is often used when there is no touch sensitivity

- NoteOff

- 1000cccc 0nnnnnnn 0vvvvvvv

- (note that NoteOn with a velocity of 0 is basically equivalent to a note off message)

- PitchBend (you'd almost expect this to be a CC, not special-cased)

- 1110cccc 0VVVVVVV 0VVVVVVV

- where V is an unsigned 14-bit integer value (0..16383) (lowest 7 bits are sent first)

- the halfway value 8192 means no pitch bend. Things will often have a small intentional dead zone, and/or limited resolution, to avoid slight out-of-tune-ness

- the receiving side can choose to make that full range correspond to larger or smaller ranges. It's fairly common to default to 2 semitones in each direction

- the sending side could also choose to bend less, just by using less of that fairly large integer range (even if 8k values are spread over an octave of bending, that is still well under a cent of resolution, though note that a lot of physical sensors won't have that precision)
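A small sketch of building these messages as bytes. Assumptions: channels are numbered 1..16 as users see them (0..15 on the wire), the bend helper assumes the receiver uses a symmetric range (the common default of 2 semitones), and the note-to-frequency helper assumes standard equal temperament with note 69 = A440, which a given synth may not use. All function names are hypothetical.

```python
def _status(kind: int, channel: int) -> int:
    """Combine a message kind (0x80, 0x90, 0xE0, ...) with a channel numbered 1..16."""
    if not 1 <= channel <= 16:
        raise ValueError("channel must be 1..16")
    return kind | (channel - 1)


def _check7(*values: int) -> None:
    """Data bytes must keep their high bit clear."""
    for v in values:
        if not 0 <= v <= 127:
            raise ValueError("data bytes must be 0..127")


def note_on(channel: int, note: int, velocity: int) -> bytes:
    _check7(note, velocity)
    return bytes([_status(0x90, channel), note, velocity])


def note_off(channel: int, note: int, velocity: int = 64) -> bytes:
    _check7(note, velocity)
    return bytes([_status(0x80, channel), note, velocity])


def pitch_bend(channel: int, value: int) -> bytes:
    """value is 0..16383, 8192 meaning no bend; the low 7 bits go first."""
    if not 0 <= value <= 16383:
        raise ValueError("pitch bend must be 0..16383")
    return bytes([_status(0xE0, channel), value & 0x7F, value >> 7])


def bend_value(semitones: float, bend_range: float = 2.0) -> int:
    """Semitones to a 14-bit value, assuming the receiver bends +/- bend_range semitones."""
    value = round(8192 + semitones / bend_range * 8192)
    return max(0, min(16383, value))   # +bend_range itself clips to 16383


def note_frequency(note: int) -> float:
    """Equal temperament, assuming note 69 = A = 440 Hz."""
    return 440.0 * 2 ** ((note - 69) / 12)
```

With these assumptions note_frequency(0) and note_frequency(127) come out at roughly 8.2 Hz and 12.5 kHz, the range mentioned above, and note_on(1, 60, 100) gives 90 3C 64.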

And perhaps

- Aftertouch adds expression to playing notes beyond the initial velocity

- ...often a variation of force happening after a NoteOn

- few instruments send it and few synths listen to it, but where it exists it certainly helps expression, and tends to be fairly intuitive.

- Also, in DAWs you can often map it to... anything, really, including some parameter of a DAW instrument that itself ignores aftertouch MIDI messages

- On keyboards that send it

- it may be a force sensor that only sends anything when you lean harder into keys, so it isn't really related to velocity.

- also consider it is frequently a per-channel thing (e.g. there's just one force sensor strip below all keys) rather than per key ('polyphonic')

- how you can use it varies.

- e.g. if it's a per-channel lean-heavily, it's probably only useful for some manual vibrato-like effect

- E.g. if it's a lighter per-key pressure thing, it might be usable as a 'how softly you are letting go'

- MIDI-message-wise

- If sensed per physical key and sent for that key, it is sent in Polyphonic Key Pressure (1010cccc 0nnnnnnn 0vvvvvvv)

- If sensed overall and sent per channel, it is sent in Channel Pressure (1101cccc 0vvvvvvv)

- ControlChange is meant for when the state of a slider, knob, footswitch, pedal, etc. changes

- 1011cccc 0CCCCCCC 0vvvvvvv

- where C is controller number, and v is a value

- see notes below

- ProgramChange

- 1100cccc 0nnnnnnn

- change the instrument patch, basically the type of instrument

- there is a list in General MIDI. Other synths tend to have their own

- this idea is extended by ControlChange 0 (Bank Select), though how is less standardized

- on some hardware (e.g. drum machines) it may have other functions

CCs

Control Change are intended as "send when a physical knob changes".

Mostly intended to alter parameters of a synth, on a particular channel.

The controller number defines what to change, and a value defines what value in a range it's changed to.

Many of the controller numbers have predefined meaning, and most synths listen to just a few of those.

There are also 'free to use' CCs, which are arguably more useful, e.g. for mapping knobs to arbitrary DAW functions with less worry about CC conflicts on a channel.

The message:

- 1011cccc 0CCCCCCC 0vvvvvvv

- where C is controller number, and v is a value

Controller numbers have a bunch of semi-standards based on CCs (some of which conflict).

A quick overview:

- 120 through 127 have functions like reset, mute, local control, omni on/off, poly on/off - and are considered channel mode messages, see below

- Most of 0 through 101ish change how a synth behaves on a specific channel

- some for specific controller types (breath, foot)

- many to specific synth parameters (portamento, soft, volume, balance, attack, sustain, release, brightness, detune, channel volume, other effect amount)

- ...except for pitch bend - which has its own message (see above)

- 6,38, 96,97, 98,99, 100, and 101 are reserved for RPN and NRPN

- 3, 9, 14 through 31, 85 through 90, 102 through 119 are general-purpose or undefined, so you can just use them, assuming other devices you have haven't done the same
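One way to turn that overview into something a mapping tool could check against, as a rough sketch. The groups are taken directly from the list above (devices may disagree, and everything else is lumped together as "predefined"); the names are made up.

```python
# CC groups as listed in the overview above (devices may disagree)
RPN_NRPN_CCS = {6, 38, 96, 97, 98, 99, 100, 101}
FREE_CCS = {3, 9, *range(14, 32), *range(85, 91), *range(102, 120)}
CHANNEL_MODE_CCS = set(range(120, 128))


def cc_kind(cc: int) -> str:
    """Rough category of a controller number, per the list above."""
    if not 0 <= cc <= 127:
        raise ValueError("controller numbers are 0..127")
    if cc in CHANNEL_MODE_CCS:
        return "channel mode"
    if cc in RPN_NRPN_CCS:
        return "(N)RPN"
    if cc in FREE_CCS:
        return "general-purpose/undefined"
    return "predefined"


def control_change(channel: int, cc: int, value: int) -> bytes:
    """1011cccc 0CCCCCCC 0vvvvvvv, with channel numbered 1..16 (values assumed 0..127)."""
    return bytes([0xB0 | (channel - 1), cc, value])


print(len(FREE_CCS))   # 44 candidate CC numbers for arbitrary mapping
```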

RPN and NRPN

If you want a bunch of arbitrary controls, you might want to find some CCs, probably skipping the ones that your devices/VSTs already listen to.

There are fewer than four dozen general-purpose or undefined CC numbers (44 by the list above) you could get away with using for arbitrary mapping, and you still have to hope other devices aren't secretly using them, so you may run out quickly.

RPN and NRPN are a simple protocol built on top of just CC messages (6,38, 96,97, 98,99, 100,101) that can set many more distinct parameters.

It also lets devices support receiving 14-bit or 7-bit values (CCs are just 7 bit).

How to send

Setting (N)RPN values is done with specific CC sequences, and RPN and NRPN work similarly to each other:

- You use two CCs to control what thing to set

- NRPNs are sent via CC99 (NRPN Most Significant Byte) and CC98 (NRPN Least Significant Byte)

- RPNs are sent via CC101 (RPN Most Significant Byte) and CC100 (RPN Least Significant Byte)

- And another CC to say what value to set it to

In that sequence, it is the CC pair you start with that controls whether it's an RPN or an NRPN message, and which parameter is being selected.

This means

- receivers keep a memory of 'most recent thing selected to be altered'.

- if you want to vary just one parameter repeatedly, you could skip the CC101+CC100 / CC99+CC98 pairs, and just keep sending CC6 (and CC38, CC96, CC97)

- ...but when you're not the only one sending these messages, it can lead to some confusing alterations later.

This is part of why there is a 'reset what we selected to nothing' (127,127), and it is considered good style to use it.

Example, setting coarse tuning RPN(0,2) to A440 (works out as value 64) (verify)

CC101:0, CC100:2, CC6:64,

Or in byte format for channel 1

B0 65 00 B0 64 02 B0 06 40

A RPN reset is

CC101:127, CC100:127

or in byte format (for channel 1):

B0 65 7F B0 64 7F
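A minimal sketch of that select / data entry / reset sequence, assuming channels numbered 1..16 and following the text in using the RPN null (CC101/CC100 = 127) as the reset, also after an NRPN. The function names are hypothetical.

```python
from typing import Optional

RPN_SELECT = (101, 100)   # CC101 = RPN MSB, CC100 = RPN LSB
NRPN_SELECT = (99, 98)    # CC99 = NRPN MSB, CC98 = NRPN LSB


def _cc(channel: int, number: int, value: int) -> bytes:
    """One Control Change message; channel is 1..16."""
    return bytes([0xB0 | (channel - 1), number, value])


def set_parameter(channel: int, param_msb: int, param_lsb: int, value_msb: int,
                  value_lsb: Optional[int] = None, nrpn: bool = False,
                  reset: bool = True) -> bytes:
    """Select an RPN or NRPN, set it via Data Entry, then optionally deselect it."""
    sel_msb, sel_lsb = NRPN_SELECT if nrpn else RPN_SELECT
    out = _cc(channel, sel_msb, param_msb) + _cc(channel, sel_lsb, param_lsb)
    out += _cc(channel, 6, value_msb)            # Data Entry MSB
    if value_lsb is not None:
        out += _cc(channel, 38, value_lsb)       # Data Entry LSB, for 14-bit values
    if reset:
        out += _cc(channel, 101, 127) + _cc(channel, 100, 127)   # RPN null (127,127)
    return out


# The coarse tuning example, on channel 1, without the reset
print(set_parameter(1, 0, 2, 64, reset=False).hex(" ").upper())   # B0 65 00 B0 64 02 B0 06 40
```

(64 decimal is 0x40 in hex.) Similarly, set_parameter(1, 0, 0, 12, 0) would ask for a pitch bend range of 12 semitones (CC6 carries semitones, CC38 cents), for receivers that support RPN (0,0). Since every message here has the same B0 status, running status could shorten it further on serial MIDI.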

What parameters there are

- There are only a few RPN parameters ("Registered Parameter Numbers")

- Apparently it started with just

- (0,0): pitch bend range

- (0,1): fine tuning

- (0,2): coarse tuning

- (127,127): RPN null / reset

- And was apparently extended with

- (0,3): Tuning Program Select

- (0,4): Tuning Bank Select

- And also:

- (0,5) Modulation Depth Range (from GM2 specs)

- (0,6) MPE Configuration Message (from MPE specs)

- (0x3D, 0 through 8): Three Dimensional Controllers

- NRPNs are more of an open set, ("Non-Registered Parameter Numbers")

- You can still get NRPNs to conflict, but there's a lot more room to play with.

- In theory it allows for 16384 (2^14) distinct new parameters.

- In practice, it seems

https://www.midi.org/specifications-old/item/table-3-control-change-messages-data-bytes-2

http://www.philrees.co.uk/nrpnq.htm

Channel mode

CC messages with controller numbers 120 .. 127 are interpreted as Channel Mode Messages.

Specifically:

- 120: All Sound Off

- 121: Reset All Controllers

- 122: Local Control

- basically, local=off says "you, a keyboard+synth, should send notes to MIDI out instead of playing yourself (also still plays what it gets)" (verify)

- 123: All Notes Off - useful for sequencers, particularly in the context of omni and poly

- 124: Omni Off

- 125: Omni On

- 126: Mono (note: has a parameter)

- 127: Poly

Omni=on means messages received from all channels are played.

Mono means a new NoteOn should end the previous note

- You may want this for portamento/glissando

- and makes sense for some instruments, like guitar controllers

More specifically,

- Mode 1 (Omni On, Poly) - from all channels

- Mode 2 (Omni On, Mono) - from all channels, control one voice

- Mode 3 (Omni Off, Poly) - from specific channel, to all voices

- Mode 4 (Omni Off, Mono) - from specific channel, to specific voices

Some controllers and DAWs have a 'panic' button used for "argh I have no idea why you are still making sound (probably a missed noteoff?), please stop it".

- This sends 123 and/or 120.

- And sometimes also NoteOff for all notes, in case a sound producer doesn't understand 123 or 120.
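A panic button could be sketched like this, following the description above: All Sound Off (CC120) and All Notes Off (CC123) on all 16 channels, optionally followed by a NoteOff for every note on every channel. The function name and the byte ordering are assumptions; real implementations differ in what they send.

```python
def panic(include_note_offs: bool = False) -> bytes:
    """All Sound Off (CC120) and All Notes Off (CC123) on all 16 channels,
    optionally followed by a NoteOff for every note on every channel."""
    out = bytearray()
    for ch in range(16):                     # 0-based channel in the status byte
        cc_status = 0xB0 | ch
        out += bytes([cc_status, 120, 0])    # All Sound Off
        out += bytes([cc_status, 123, 0])    # All Notes Off
        if include_note_offs:
            for note in range(128):
                out += bytes([0x80 | ch, note, 0])
    return bytes(out)
```

The brute-force NoteOff sweep makes this 6240 bytes in total, which takes about two seconds on serial MIDI at 320 microseconds per byte, so it may be worth only sending NoteOffs for notes you know are still held.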

System Messages

All start with 1111 (0xF0 through 0xFF).

Divided into

- System Common Messages (11110000 through 11110111)

- SysEx (11110000 (data) 11110111)

- System Real-Time Messages (11111000 through 11111111)

- all single-byte, no data

There's time/song/tuning stuff in both of these sections, so there's no huge distinction, except for some scheduling details (e.g. real-time messages may be sent during SysEx).

SysEx is used for a lot of stuff that fits nowhere else

- e.g. device-specific interaction stuff (usually in the manual) that doesn't suit CCs

- some parameter upload stuff (e.g. DX7 SysEx)

MIDI monitors may report all of this as SysEx, just because most of it's special-cased control stuff.

SysEx takes the form of

- 0xF0 (SysEx start)

- Manufacturer ID (1 or 3 bytes), helps synths decide whether it should try to read the message [2]

- a variable amount of bytes

- 0xF7 (SysEx end)
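A minimal sketch of framing and unframing a SysEx message, assuming the payload is already 7-bit clean. In the example, 0x7D is the ID set aside for non-commercial/educational use (a reasonable choice for DIY experiments); the 3-byte ID in the test is arbitrary. The function names are made up.

```python
def sysex(manufacturer_id: bytes, payload: bytes) -> bytes:
    """Frame payload as F0 <manufacturer id> <payload> F7."""
    one_byte = len(manufacturer_id) == 1 and manufacturer_id[0] != 0x00
    three_byte = len(manufacturer_id) == 3 and manufacturer_id[0] == 0x00
    if not (one_byte or three_byte):
        raise ValueError("ID must be 1 byte, or 3 bytes starting with 0x00")
    body = manufacturer_id + payload
    if any(b & 0x80 for b in body):
        raise ValueError("everything between F0 and F7 must have its high bit clear")
    return b"\xF0" + body + b"\xF7"


def manufacturer_of(message: bytes) -> bytes:
    """Read the manufacturer ID back out of a framed SysEx message."""
    if message[:1] != b"\xF0" or message[-1:] != b"\xF7":
        raise ValueError("not a complete SysEx message")
    return message[1:4] if message[1] == 0x00 else message[1:2]
```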

On timing, bandwidth, latency

Serial MIDI is fixed at 31250 baud, slow by modern standards - yet not too bad, and easy to implement at the time.

Sending a byte (counting start and stop bits) takes 320 microseconds.

Since common messages are one, two, or three bytes long (NoteOn and NoteOff are 3-byte), most things arrive in under 1 millisecond.

Yet wanting to send, say, ten 3-byte messages all at once means the last will be physically put on the wire ~10ms later.
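The arithmetic above as a tiny sketch: 10 bits per byte (start bit, 8 data bits, stop bit) at 31250 bits per second. Integer microseconds keep the numbers exact.

```python
BAUD = 31250          # bits per second on serial MIDI
BITS_PER_BYTE = 10    # 1 start bit + 8 data bits + 1 stop bit


def wire_time_us(n_bytes: int) -> int:
    """Time to clock n_bytes onto a serial MIDI wire, in microseconds."""
    return n_bytes * BITS_PER_BYTE * 1_000_000 // BAUD


print(wire_time_us(1))       # 320: one byte
print(wire_time_us(3))       # 960: one NoteOn
print(wire_time_us(10 * 3))  # 9600: ten NoteOns sent back to back
```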

There are a few tricks to alleviate this on serial MIDI, including but not limited to:

- Running Status skips the first byte of a message (the status) if it's the same as that of the previously sent message.

- e.g. allowing shortening of a handful of NoteOns, or a bunch of CCs,

- for example, three successive noteons might normally be 0x90 0x70 0x7F 0x90 0x71 0x7F 0x90 0x72 0x7F but with running status be 0x90 0x70 0x7F 0x71 0x7F 0x72 0x7F.

- https://www.midikits.net/midi_analyser/running_status.htm

- A running-status NoteOn with velocity 0 is one byte shorter than a NoteOff

- since NoteOn with velocity 0 is understood as identical to a NoteOff by most synths anyway
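A minimal sketch of a sender applying both tricks: the status byte is dropped when it repeats, and NoteOffs can optionally be rewritten as NoteOn with velocity 0 so they share the NoteOn status. Assumptions: messages arrive as complete byte strings, System Common messages (0xF0..0xF7) cancel running status, and Real Time bytes (0xF8 and up) leave it alone, as the MIDI 1.0 spec describes. The function name is made up.

```python
def encode_running_status(messages: list, note_off_as_note_on: bool = False) -> bytes:
    """Concatenate complete messages, omitting status bytes that repeat."""
    out = bytearray()
    last_status = None
    for msg in messages:
        status = msg[0]
        if note_off_as_note_on and (status & 0xF0) == 0x80:
            # NoteOff -> NoteOn with velocity 0 on the same channel (drops release velocity)
            status = 0x90 | (status & 0x0F)
            msg = bytes([status, msg[1], 0])
        if status >= 0xF8:
            out += msg                # Real Time: sent as-is, running status unaffected
        elif status >= 0xF0:
            out += msg                # System Common/SysEx: sent as-is, cancels running status
            last_status = None
        elif status == last_status:
            out += msg[1:]            # same status as before: send only the data bytes
        else:
            out += msg
            last_status = status
    return bytes(out)
```

The NoteOff rewrite throws away release velocity, which is usually fine, but worth knowing if a receiver does use it.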

This throughput limit rarely matters to human playing, because our timing is never robotically perfect, and saturating the bus with note messages would require playing so fast it wouldn't be musical.

However, it can start to matter with

- MIDI sequencers, which may wish to send a bunch of things exactly on a beat

- continuous change of pitch/modulation wheel, polyphonic aftertouch, or other fast CC

- but an even slightly clever controller prioritizes note messages, so this should matter less

- The strongest argument (for something faster) may be Polyphonic Expression (altering parameters per played note), as that is currently often implemented by assigning notes (and their alterations) to channels round-robin.

- that said, many of those are now USB-MIDI where it doesn't matter

In USB-MIDI, the data that is transmitted is almost entirely the same, but due to the way it is packed and the higher speeds involved, the latencies don't really add up anymore.

(The latency of USB itself is still ~1ms, though, due to the way the OS handles it).

MIDI Sync and timing

Beat clock

For context:

Around music, sync refers to drum machines and sequencers communicating rhythm, or rather the pulse that a rhythm is based on. The earliest drum machines all used simple ad-hoc standards. Most were basic electronic pulses sent over a wire dedicated to it, but interpretation (mostly PPQN rate) varied, per brand and sometimes even model.

MIDI beat clock, a.k.a. beat clock or MIDI clock, is a more uniform standard, by being part of MIDI and fixed at 24 PPQN.

The MIDI message most central to beat clock is

- 0xF8 - MIDI Beat Clock, sent at a rate of 24PPQN

Note that MIDI clock is only a sync signal.

It doesn't trigger or alter any note; it exists purely to keep different devices that generate their own patterns in time with each other, via a master rate.

Such devices may well listen to related messages like

- 0xFA - Start

- 0xFB - Continue

- 0xFC - Stop

https://en.wikipedia.org/wiki/MIDI_beat_clock
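At 24 PPQN the arithmetic between tempo and clock spacing is simple; a small sketch, assuming tempo is counted in quarter notes per minute. The averaging in the second function is one simple choice to smooth out jitter on individual ticks, not the only one. The names are made up.

```python
CLOCKS_PER_QUARTER = 24   # MIDI beat clock is 24 PPQN


def clock_interval_ms(bpm: float) -> float:
    """Time between successive 0xF8 messages at a tempo in quarter notes per minute."""
    return 60_000 / (bpm * CLOCKS_PER_QUARTER)


def bpm_from_clocks(timestamps_ms: list) -> float:
    """Estimate tempo from arrival times of consecutive 0xF8 messages.

    Averaging over many clocks smooths out jitter on individual ticks.
    """
    if len(timestamps_ms) < 2:
        raise ValueError("need at least two clock timestamps")
    avg = (timestamps_ms[-1] - timestamps_ms[0]) / (len(timestamps_ms) - 1)
    return 60_000 / (avg * CLOCKS_PER_QUARTER)
```

At 120 BPM that works out to a clock roughly every 20.8 ms.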

MTC

MIDI Time Code (MTC) is something like SMPTE timecode communicated over MIDI.

MTC is useful to synchronize things that are referenced in SMPTE (so wallclock-ish) time. (SMPTE timecode itself came from the wish to sync audio and video recorded separately, instead of having to do so manually e.g. using a clapperboard)

As such, MTC communicates hours, minutes, seconds, and frames, plus the rate (one of four: 24, 25, 29.97 drop-frame, or 30 fps),

(though most of the running messages are Quarter Frame messages, not the full thing each time).
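A sketch of how one time position is spread over the eight Quarter Frame messages (status 0xF1, one data byte each: piece number 0..7 in bits 4-6, a 4-bit value in bits 0-3). Pieces 0-5 carry the low and high nibbles of frames, seconds and minutes; pieces 6-7 carry the hours, with the 2-bit rate code (0=24, 1=25, 2=29.97 drop-frame, 3=30 fps) packed into the top of piece 7. This is as I understand the MTC layout, worth checking against the spec; the function names are made up, and a real receiver also has to cope with the pieces arriving spread over two frames.

```python
# fps -> 2-bit MTC rate code (29.97 is the drop-frame rate)
RATE_CODES = {24: 0, 25: 1, 29.97: 2, 30: 3}


def quarter_frames(hours: int, minutes: int, seconds: int, frames: int, fps: float) -> list:
    """Spread one timecode over the 8 Quarter Frame messages (F1 0ppp vvvv)."""
    hours_byte = (RATE_CODES[fps] << 5) | hours       # 0rrhhhhh
    fields = [frames, seconds, minutes, hours_byte]   # pieces 0-1, 2-3, 4-5, 6-7
    messages = []
    for piece in range(8):
        value = fields[piece // 2]
        nibble = (value & 0x0F) if piece % 2 == 0 else (value >> 4)
        messages.append(bytes([0xF1, (piece << 4) | nibble]))
    return messages


def decode_quarter_frames(messages: list) -> tuple:
    """Rebuild (hours, minutes, seconds, frames, rate_code) from all 8 pieces."""
    nibbles = [0] * 8
    for msg in messages:
        nibbles[msg[1] >> 4] = msg[1] & 0x0F
    frames, seconds, minutes, hours_byte = (
        nibbles[i] | (nibbles[i + 1] << 4) for i in range(0, 8, 2)
    )
    return hours_byte & 0x1F, minutes, seconds, frames, hours_byte >> 5
```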

It was made with the idea of interfacing audiovisual equipment with MIDI,

e.g. have a tape machine emit SMPTE so that it could be a source of cues, and e.g. have synths play along.

It also lets you show time (or bars and beats?) to people, which has potential uses while seeking in MIDI files.

You're more likely to see MTC emitted and/or consumed by DAWs.

It allows things like cueing between them (which beat clock can't do because there is no position information).

There are other potential uses, like triggering specific things at a specific time, or playing videos synced to music[3].

http://midi.teragonaudio.com/tech/mtc.htm

Extensions

MCU and HUI

Mackie Control Universal (MCU) and Mackie HUI both refer to physical controllers from the late nineties.

These controllers were originally tied to specific DAWs (MCU to Logic, as the Logic Control, and HUI to Pro Tools).

Later, other DAWs and other devices started speaking this protocol, for compatibility/feature reasons.

Why?

Trying to standardize certain control of things you use in music production.

MIDI itself has a bunch of standard-enough control mappings -- but they are mostly for synth parameters, not for common DAW controls, so if you want to use MIDI to control things like mixer volumes, record/play, mute, and such, you would need to spend a while mapping CCs (assuming the DAW supports automation of these controls and makes it easy enough), and it'd probably be setup-specific, and might clash with already configured CCs.

MCU/HUI defined a protocol on top of MIDI (mostly regular note, pitch bend, and CC messages, plus SysEx for things like display text) where many of these added functions were defined in a standard-ish way, so they would work with little to no configuration if plugged into a DAW that knows about it.

It seems they talk both ways, so can also show information from the DAW.(verify)

While it was kept closed for years, it's now basically known, and there is decent documentation available, e.g. in the Logic Control manual's MIDI Implementation section.

MMC (MIDI Machine Control)

MIDI Machine Control (MMC) is meant for control of physical media, including

Play, Fast Forward, Rewind, Stop, Pause, and Record

Note that there are other start/stop mechanisms (like beat clock's, and some more custom ones) that are likelier to be used.

Implemented via SysEx, basically the sequence:

- F0 Start byte

- 7F (Universal Real Time SysEx ID)

- xx Device ID, or 7F for 'all'

- 06 Denotes an MMC command

- xx command

- F7 Stop byte

Where command is one of

- 01 - Stop

- 02 - Play

- 03 - Deferred Play

- 04 - Fast Forward

- 05 - Rewind

- 09 - Pause

e.g. an MMC Stop may be

F0 7F 7F 06 01 F7

https://en.wikipedia.org/wiki/MIDI_Machine_Control
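The MMC byte sequence above as a tiny builder, as a sketch. The command table only holds the commands listed above, and the names are made up.

```python
# MMC command bytes from the list above
MMC_COMMANDS = {
    "stop": 0x01, "play": 0x02, "deferred_play": 0x03,
    "fast_forward": 0x04, "rewind": 0x05, "pause": 0x09,
}


def mmc(command: str, device_id: int = 0x7F) -> bytes:
    """F0 7F <device id> 06 <command> F7; device id 0x7F means 'all devices'."""
    if not 0 <= device_id <= 0x7F:
        raise ValueError("device id must be 0..127")
    return bytes([0xF0, 0x7F, device_id, 0x06, MMC_COMMANDS[command], 0xF7])


print(mmc("stop").hex(" ").upper())   # F0 7F 7F 06 01 F7
```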

MSC (MIDI Show Control)

Meant for things like cues and lighting.

Implemented via SysEx

https://en.wikipedia.org/wiki/MIDI_Show_Control

MVC (MIDI Visual Control)

MIDI Visual Control Specification.pdf

Fragmented notes on mapping

MIDI files

Other stuff

Octave numbering

Percussion

On note mapping

MIDI percussion means mapping a bunch of notes to specific percussion instruments (so yes, playing MIDI drums into a regular synth mostly gets you a few regular notes).

Most follow the General MIDI percussion list, but some drum modules and DAWs specifically deviate.

https://usermanuals.finalemusic.com/SongWriter2012Win/Content/PercussionMaps.htm

On NoteOff

Only NoteOn matters to the sound being made, and in various drum modules you can get away with not sending NoteOff at all.

However, DAWs may get confused if you don't send the corresponding NoteOff before the next NoteOn (possibly because they try to do clever things with note lengths). Which matters when they're hosting the drum VST you want to use.

So just do send the NoteOff.

The easiest implementation would be to send it just before the next NoteOn, but that has a small effect on latency, so ideally you want to have sent it before that.

Because most drum modules ignore it, you can typically send the NoteOff immediately after the NoteOn(verify). If you want visible feedback of the notes in a DAW, it may be slightly nicer to send it some time later based on a timeout after the NoteOn.
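A sketch of the timeout approach, producing timed events rather than sending anything. Assumptions: General MIDI percussion (which GM puts on channel 10), a handful of GM note numbers, and an arbitrary 50 ms gate; the names are made up. At equal times NoteOffs are sorted before NoteOns, so a repeated note is always released before it is struck again.

```python
# A few General MIDI percussion notes (GM puts percussion on channel 10)
GM_DRUMS = {"kick": 36, "snare": 38, "closed_hat": 42,
            "open_hat": 46, "crash": 49, "ride": 51}
DRUM_CHANNEL_INDEX = 9   # channel 10 as users count it, 0-based on the wire


def drum_hit(time_ms: float, name: str, velocity: int, gate_ms: float = 50.0) -> list:
    """A NoteOn at time_ms plus its NoteOff gate_ms later, as (time, bytes) pairs."""
    note = GM_DRUMS[name]
    return [
        (time_ms, bytes([0x90 | DRUM_CHANNEL_INDEX, note, velocity])),
        (time_ms + gate_ms, bytes([0x80 | DRUM_CHANNEL_INDEX, note, 0])),
    ]


def schedule(hits: list) -> list:
    """Merge several hits into one time-ordered event list.

    At equal times NoteOffs sort before NoteOns (False < True in the key).
    """
    events = [event for hit in hits for event in hit]
    return sorted(events, key=lambda e: (e[0], (e[1][0] & 0xF0) == 0x90))
```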

On velocity

Given that 127 is the loudest hit, perhaps 100 could be a regular hit, around 50 is already ghost-note territory, and under 20-30 you may not hear anything.

General MIDI

SPMIDI

Scalable Polyphony MIDI was targeted mainly at ringtones on simple mobile phones.

It allows SMF files to say how they should be simplified to play on devices that make only simpler sounds.

https://www.midi.org/specifications-old/item/scalable-polyphony-midi-sp-midi

MIDI software

MIDI hardware and DIY

MIDI thru

MIDI merger

MIDI USB

Physical thing to MIDI DIY

Event processors

Fancier

More expression, and MPE

MIDI 2.0

MIDI and MIDI-like control from software

Lemur

- initially a physical device, one of the earliest touchscreen devices, long discontinued

- now software by Liine

- scriptable

- https://liine.net/en/products/lemur/

- apple only?

Touch OSC

- scriptable in Lua

- ~20 bucks for a (device?) license

- https://hexler.net/touchosc

Liine LiveControl 2

- successor to Touch OSC

- ~25 bucks

- https://liine.net/en/products/lemur/premium/livecontrol-2/

Open Stage Control

- scriptable in Javascript (the entire thing is Electron(verify))

- FOSS

- https://openstagecontrol.ammd.net/

MIDI Designer

- no scripting?

See also: OSC

See also

See also:

- https://www.midi.org/specifications/item/the-midi-1-0-specification

- https://www.midi.org/specifications

- https://www.midi.org/specifications/item/table-1-summary-of-midi-message

- https://www.midi.org/specifications/item/table-3-control-change-messages-data-bytes-2