Sound physics and some human psychoacoustics

Frequency, wavelength

Frequency is expressed in Hertz (Hz), a unit that itself means "(times 1) per second".

It is used, among other things...

- to indicate how many cycles a periodic signal (often a sine wave or similar) completes per second (e.g. '3000Hz tone')

- (in recording) how many samples are taken per second ('sampled at 44100Hz'), or

- the regularity of clock ticks (e.g. in CPU speed, chip synchronization)

A wavelength, also 'period', is the length of a full wave (particularly of a regular repeating signal), often expressed in time.

For example, a 200Hz wave has a wavelength of 1/200 = 0.005 seconds.

When produced in the real world, wavelength also has a physical length.

Physical wavelength matters when talking about resonance (e.g. which frequencies will resonate in a microphone, speaker design, room, mouth, etc.), and when engineering things for phase effects.

Note that physical wavelength depends on the speed of the medium. For example, the wavelength...

- of a 200Hz sound in air is (343m/s * 0.005s = ) 1.72 meters

- of a 200Hz sound in water is (~1500m/s * 0.005s = ) 7.5 meters

- of a 200Hz electromagnetic wave is (~300,000 km/s * 0.005s = ) 1500 kilometers
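
The period and physical wavelength arithmetic above can be sketched in a few lines of Python. The function names are made up for illustration, and the speeds are the same approximate round figures used in the examples (343m/s for air, roughly 1500m/s for water).

```python
def period_seconds(frequency_hz):
    """Duration of one full cycle, in seconds."""
    return 1.0 / frequency_hz


def wavelength_meters(frequency_hz, speed_m_per_s):
    """Physical length of one full cycle, given the propagation speed in the medium."""
    return speed_m_per_s / frequency_hz


# Approximate propagation speeds (assumed round figures)
SPEED_AIR = 343.0
SPEED_WATER = 1500.0

print(period_seconds(200))
for name, speed in [("air", SPEED_AIR), ("water", SPEED_WATER)]:
    print(f"200Hz in {name}: {wavelength_meters(200, speed):.3g} m")
```

Dividing speed by frequency is the same thing as multiplying speed by the period, which is how the list above writes it.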

Frequency roll call

0.1 .. 10Hz Earthquakes (roughly primary and secondary waves, respectively)

20Hz Roughly the lowest frequency you hear (rather than just feel) if strong enough

<25Hz Seismic noise

20 .. 40Hz Lowest produced frequency by subwoofers lies in this range

20 .. 150Hz Cat's purr (some quote 'up to 200Hz') [1]

40Hz .. 100Hz The lowest frequency produced decently by two-or-three-inch speaker drivers

(drivers that go below 40Hz are physically larger)

100Hz, 200Hz Usual lower limit of most male and female voices (respectively)

500Hz .. 1kHz the bulk of the volume in human speech

2kHz the highest base frequency from a lot of instruments or very high-pitched vocal cords

(harmonics go higher, but their amplitude falls off fairly quickly)

100Hz .. 5kHz Bulk of energy from speech

5kHz Roughly the point above which vocal cord harmonics start to have

fallen off so much that they matter little to intelligibility

upper limit of AM radio transmission's content

12kHz .. 14kHz Roughly the highest frequency produced by regular few-inch speaker drivers

most instrument harmonics have fallen off to little by this range

≈16kHz People can tell whether there is an analog TV nearby by this (see note below)

≈17kHz the 'mosquito tone' used to drive off young people is somewhere around here

16kHz .. 18kHz The limit of most recording equipment,

partly through the design of (common) microphones

15kHz .. 20kHz Threshold of frequencies various people can nearly/not hear

16kHz .. 22kHz dog whistles (intentionally with some of it down in human hearing range; dogs hear up to 30kHz or so)

Yes, sounds go higher, but for a practical human-centric list it's relevant we hear little beyond 15kHz and almost nothing beyond 20kHz.

A number of animals hear up to 30 or 40kHz, whales higher (40Hz~80kHz), and bats have exceptionally good hearing (20Hz up to maybe 200kHz, lower for some species), though their cries are far lower.

Note that in terms of just physical amplitude, most of the sound around us is in the lower frequencies - there is generally a dropoff that starts within the human-audible range

Related notes:

- Names for rough ranges of frequencies vary - fairly wildly.

- bass is lower than 250~400Hz

- some make the further distinction of sub-bass, often as below 50Hz

- ...and may use 'upper bass' to refer to the rest of the bass range

- Mid range starts at some number in the 250-400Hz range, and up to some number in the 2-6kHz range

- some additionally split into 'high/upper mids' and 'low mids'

- high frequencies refer to everything above the chosen mid range up to the edge of hearing, so this usually goes from something in the 5-6kHz range to something in the 16-20kHz range

- For almost any natural (or even synthesized) source, there is a steady falloff as frequencies increase

- This seems to be around -6dB/octave around mid frequencies,

- a little faster at higher frequencies,

- and lower in low frequencies (more so in music?)

- The peak

- tends to be around 100Hz or so for music (but this is rough)

- and maybe a little higher for voice

- and can be higher if there is filtering - or the microphone doesn't do low frequencies so much.

- Recording media usually try to store frequencies in the few kHz to perhaps 16kHz range (if they have or want to spend the bandwidth), as that range contains the overtones that make recorded signals sound crisp.

- Various music compression methods apply a lowpass filter which falls off somewhere around 15kHz-18kHz, since signals above that are generally inaudible.

- The squeak from (CRT) TVs that some people hear seems to come from the transformer driving the refresh, which since that rate is standard is typically at about 15.7kHz(verify) (525 lines x 30 frames = 15750Hz or 625 lines x 25 frames = 15625Hz), though it works out as a slightly wider band of noise around that(verify).

- Fluorescent lights may be similarly audible, but their frequency is not influenced by any one standard, varies by design, and may be much higher than 15kHz and not audible to anyone

- The sounds that people say only teenagers hear vary a little - they are somewhere in the 16kHz-17.5kHz range, and may be hearable or annoying depending on the frequency and the amplitude

- 15kHz at a respectable volume will be annoying to more people.

Spectrum

A spectrum is a summary of a signal in terms of the frequency content present throughout, typically shown in amount of energy in a bunch of frequency ranges.

This does not indicate change over time, just the overall average. See spectrogram for that.

Great as a summary, not very useful beyond that.

Spectrogram

Phase

Phase is the timing relation between a wave and some reference point, often either an arbitrary zero point, or another wave's starting point.

There are different ways of expressing this. One is an angle, in degrees (0 to 360) or radians (0 to 2*Pi), another is fractions (0 to 1). For example, a wave 90 degrees out of phase with another is (Pi/2) radians out of phase, and starts a quarter (0.25) of the wave's length later.

In the context of tones of a specific frequency, you can also express this in time.

For example, 90 degrees is a quarter of the wave's full cycle, so for a 1Hz wave this is 0.25 of a second. For a 2Hz wave 90 degrees is 0.125 of a second, etc.

Values outside the range representing a single wave are valid, but only sometimes directly useful. For example, 1244 degrees is three full waves and 164 degrees. For most purposes the 'three full waves' part is not relevant, and most programs will (and often can) only report 164. (Within a few design cases, you may want to keep results in which phase isn't made to wrap like this.)
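
As a small sketch of the conversions above (the function names are illustrative, not from any particular library): phase as an angle, as a time offset for a given frequency, and wrapped to a single cycle.

```python
import math


def phase_to_seconds(phase_degrees, frequency_hz):
    """Time offset corresponding to a phase angle, for a tone of the given frequency."""
    return (phase_degrees / 360.0) / frequency_hz


def wrap_degrees(phase_degrees):
    """Drop whole cycles, leaving a phase from 0 (inclusive) to 360 (exclusive)."""
    return phase_degrees % 360.0


print(math.radians(90))          # 90 degrees expressed in radians (Pi/2)
print(phase_to_seconds(90, 1))   # 0.25
print(phase_to_seconds(90, 2))   # 0.125
print(wrap_degrees(1244))        # 164.0
```

If you need the unwrapped value (the design cases mentioned above), simply skip the wrapping step.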

Phase matters when you mix signals. Two sine waves of the same frequency and amplitude will add to somewhere between double the amplitude (same phase, purely constructive interference) and zero (half a wavelength out of phase, purely destructive interference), with something in between for other values of the phase.
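
To see this numerically, here is a minimal sketch that samples one cycle of two unit-amplitude sines with a given phase offset and reports the peak of their sum. The function name and the sample count are arbitrary choices for illustration.

```python
import math


def summed_peak(phase_degrees, samples=3600):
    """Peak amplitude of sin(x) + sin(x + phase), sampled over one full cycle."""
    phase = math.radians(phase_degrees)
    return max(
        abs(math.sin(x) + math.sin(x + phase))
        for x in (2 * math.pi * i / samples for i in range(samples))
    )


for degrees in (0, 90, 180):
    print(degrees, round(summed_peak(degrees), 3))
```

The results should come out at about 2 for 0 degrees, about 1.414 for 90 degrees, and about 0 for 180 degrees, matching the closed form 2*|cos(phase/2)|.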

Phase information is important to concurrent signals, and particularly to digital processing that recreates waveforms from component waveforms, which includes most compressed audio formats and many digital filters.

Without phase, the sound would be recognizable, but wouldn't sound very good; it would give unpredictably constructive and destructive interference and other strange effects.

Pressure, intensity, volume, loudness

What physics says

Physics talks about force, and more specifically about force per area (better known as pressure), for which the unit is a Pascal - which is 1 Newton per square meter (SI derived units makes physicists happy).

If you want some intuition for Pascals:

- 20 microPascal is the threshold of hearing

- 200 microPascal is rustling leaves

- whispering is around 1 or 2 milliPascal

- regular sound is about 10 or 20 milliPascals

- up to 200 milliPascals is moderate singing or some traffic

- around 1 Pascal is heavy traffic or a blender or loud machinery or quite loud music

- 20 Pascal is a loud concert

- maybe 50 Pascal is pain

- 200 Pascal means hearing damage on a short term

Intensity is not force applied per area, but energy delivered per area, usually in Watt per square meter, W/m2 (sometimes in Watt per square centimeter, a factor ten thousand larger), which is both a useful and common physical measure, and one that is directly proportional to energy delivered to a listener's eardrum area.

Note that in that Pascal list, we went from micro-, to milli-, to no multiplier - in other words, the loud end of that scale is about ten million times as much force as the quiet end.

If you try to plot that on a linear scale, with the top being 20 (loud concert), then most of the everyday stuff will be

- in the lowest pixel even if you have a 4K screen

- in the lowest millimeter even on a large page of paper.

That's not very practical.

Separately, it has been observed that when we listen to intensities, each factor two in intensities is a barely perceptible step louder, and a factor ten in intensities is heard as a solid step louder.

So it has been suggested that loudness is perceived on a roughly logarithmic scale: it takes not a steady increase but some multiple more to be perceived as a mere step louder.

This means it is sensible to put these energy numbers through a logarithm,

because the result of that is that each further multiplication of intensity becomes a merely steady increase, much like perceived loudness --

and that number of a 'million times lower' becomes a number that expresses '6 orders lower'.

When math actually adds practicality

For context, ratios themselves will express relative levels (often power levels) perfectly fine.

Say, 2 means twice as much, 0.5 means half. Pretty simple.

Decibel is a logarithm of such a ratio of two levels.

Which means that like ratios, decibels only express a ratio, not an absolute quantity,

so by itself it just lets you say "is this much larger or smaller than another thing" - and nothing more.
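
A minimal sketch of that conversion, assuming the two levels are power-like quantities (which is what makes the factor 10). The function names are made up for illustration.

```python
import math


def ratio_to_db(power_ratio):
    """Express a ratio of two power-like levels in decibels."""
    return 10.0 * math.log10(power_ratio)


def db_to_ratio(db):
    """Inverse: the power ratio that a decibel figure stands for."""
    return 10.0 ** (db / 10.0)


for ratio in (2, 0.5, 10, 1e12):
    print(ratio, round(ratio_to_db(ratio), 2))
print(round(db_to_ratio(45)))
```

Twice as much comes out at roughly +3dB, half at roughly -3dB, ten times at +10dB, and going the other way, 45dB stands for a factor of a little over thirty thousand.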

Positive dB is louder.

Negative dB is quieter.

...than what, though?

Just a ratio has no direct real-world meaning.

It gets real-world meaning by fixing one of the two levels to a real-world reference.

Which is useful, so we usually do that.

Decibel with a real-world reference

For example, around sound you most commonly have dB SPL (Sound Pressure Level). (This is so common that it's often implied. When you hear decibel to mean sound level, it's almost certainly dB SPL)

The reference for dB SPL is 20µPa (RMS).

...which is the approximate threshold of human hearing in air.

- this makes almost all useful figures positive, within 0..120dB

- negative dB SPL exists - it's not negative sound, it's just quieter than the level we decided is a useful reference when it's sound we're listening to.

- Which often means you need an anechoic chamber to not be drowned out by the environment

- which needs high quality audio equipment to not be drowned out by equipment's noise

- ...so in a practical sense, few of us ever deal with

- ...or even

busier parts of a city tend to be 50dB ambient, and even quieter parts of cities rarely go below 40

"So 0dB SPL is a quiet room?"

Very quiet, more quiet than you need.

...though also not as quiet as is technically possible. Note that there is only a human significance to the 0dB point in dB SPL, not one related to physics. The 0dB SPL point is useful to have, but not an absolute.

You can get negative dB SPL - which isn't negative sound, it just means the background noise is quieter than our ears perceive. Which you can get only in an anechoic chamber.

In recording, your setup doesn't need to be any quieter than the environment noise you record in,

so more practically it is

- rare to have the environment be below 15-20dB (decent studio)

- not common to have the environment be below 30-40dB (regular rooms)

- not very useful to have a recording room that is quieter than your breathing

In music, you are part of the environment noise, so there is a point at which gearheadery becomes complete faff.

But, practically, why?

"We already had that ratio. As a number that is a factor - seems straightforward. What is the benefit of adding a logarithm?"

There is also the idea that many of our senses (sound, light, touch, more) are perceived in a way where increasingly larger steps feel like a merely steady increase. ...it turns out quite approximately - see e.g. Wikipedia: Stevens' power law -- and in particular the criticism. Even where the numbers aren't an exact model, the "very large difference" part is still workable.

E.g. ten times an initial sound level may sound only twice as loud, and a hundred times the initial level maybe three times as loud.

Logarithms capture that concept fairly well - ten times the magnitude is +10dB, a hundred times the magnitude is +20dB; a tenth of the magnitude is -10dB, etc.

Dealing with large ranges

One reason is that when you work with power levels, you often work with a large range of them.

Say, the loudest sounds we can deal with and the quietest we can hear are a factor 1,000,000,000,000 different.

More everyday differences are more on the order of 1,000 to 1,000,000, but these are still large numbers.

In sound as well as in some radio-frequency engineering, you care that the noise is much smaller than your signal, preferably on the order of a millionth or more.

Decibels in general let us express such very large and very small ratios with numbers that look much more reasonable.

Yes, we could have used scientific notation, in fact the whole idea is fairly similar,

but arguably expressing all factors and levels in decibel-like things ends up being less messy.

Graphing

Logarithms also help express such things in a graph.

Plotting factors much smaller than 1/100 runs you into the limit of pixels on a screen, or grains in a piece of paper.

So plotting noise floors would easily require a piece of paper larger than your house - or even block if it's decent.

What ears and the brain have to add

Inner and outer ear: Cochlea, basilar membrane, meatus, and more

Implications from physiology

On high frequencies

The upper frequency limit is mostly explained by a few things: the fact that the basilar membrane stops, that the hairs are shorter and harder to excite, and that our inner ear acts like a lowpass filter (direct delivery to the skull can let us hear frequencies we would normally consider ultrasound, though the difference isn't that large).

There is a gradual falloff, and around 19 or 20kHz is large enough that we can disregard higher frequencies for most purposes.

It seems most children can hear a little beyond 20kHz, and the figure drops over your lifetime (hearing damage or just physiology?(verify)), so that by your thirties you hear little beyond 18kHz, and eventually hear little beyond 15kHz.

It seems women hear high frequencies slightly better on average(verify), but there is also noticeable variation per person, and a lot of variation in hearing damage by loud music (headphones as well as clubs).

Note that you might still notice loud ~18kHz tones, but loud sound that high is rare compared to loud sound in, say, the few-kilohertz range.

If you can hear high frequencies at all, it is often no more interesting than being able to tell a CRT-style TV is on or off.

Informal tests of your own are easily inaccurate, because there are multiple things that easily go wrong:

- headphones vary in their response in such high frequencies

- when the audio path distorts, it may easily introduce a lower tone via aliasing

- which may lead you to believe you can hear 22kHz when you're actually listening to something like 15kHz
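
As a rough sketch of how such a lower tone can arise, this models idealized sampling with no anti-aliasing filter, where a tone above half the sample rate folds back down. Real audio paths are messier, and the function name and the sample rates used are assumptions for illustration.

```python
def aliased_frequency(tone_hz, sample_rate_hz):
    """Frequency at which a tone shows up after ideal sampling without anti-alias filtering."""
    folded = tone_hz % sample_rate_hz
    # Anything above half the sample rate mirrors back below it
    return min(folded, sample_rate_hz - folded)


print(aliased_frequency(1000, 44100))    # below half the sample rate: unchanged
print(aliased_frequency(29100, 44100))   # folds down to 15000
print(aliased_frequency(22000, 37000))   # hypothetical 37kHz stage: folds down to 15000
```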

Another question to be raised is whether there is much sound we would consider useful above 15kHz.

People would understand each other even if there was a hard cutoff at half that, and phones cut out at less than that, not too far above 4kHz, which still captures the bulk of human speech. (Note that this is not the cause of tinniness on the phone; this comes from a cut of lower frequencies)(verify)

Audiophiles may argue that the overtones (harmonics) that make music richer should be given a lot of leeway, which is true but depends on the definition of 'a lot'. We include up to ~20kHz in most signals just to be safe, but because of both physical amplitude of overtones and because of human hearing, the overtones that are noticeable lie only in the first few multiples. Producing a double high C (~2kHz) is impressive for instrument and vocal cords alike, and even for that, overtones have seriously petered off before 15kHz.

Few microphones go above 16kHz with any accuracy, and 15kHz is a more common rating to come out of realistic tests. Microphones tend to filter out higher frequencies by design, partly because higher frequencies may resonate with small parts of the microphone, and partly so that you don't have to do this filtering in your recording setup - if it didn't, you might easily get erroneous resonance recorded - various media are capable of recording a good deal above 15kHz, after all.

Strictly speaking you can only tell microphone response from properly measured response curves (and checking for recording accuracy) since summary figures like 20Hz-20kHz say little about the amplitude at each frequency -- and if the figures are that round-figured they're a bit suspect in any case. Microphones tend to have a lower frequency limit too, which is one large reason microphone choice matters in recording.

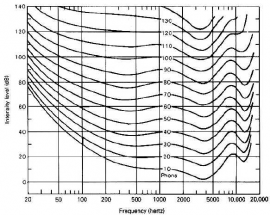

Perceptual loudness of different frequencies

Physical reality can be described in terms of pressure or intensity, but human perception of the same sounds cannot.

The most important detail is probably the fact that we hear the same physical amplitudes of different frequencies as different intensities. For an extreme example, a pure 40Hz tone needs to be about 45dB louder than a pure 4000Hz tone to be perceived as just about as loud (note that 45dB is roughly a multiple of 30000 of energy used).

This difference is caused by the way our ears work. This has been measured several times,

- notably by Fletcher and Munson (1933),

- a little more accurately by Robinson and Dadson (1956),

- and more accurately since that (see e.g. ISO 226:2003 for details).

Most of these tests used near-perfect listening conditions, middle-aged people, and perhaps most importantly, pure tones. This last detail limits their value of direct application in the face of complex signals, noise, temporal psychoacoustics (pulses, listener fatigue), music, and such.

The test results are often viewed as a graph of equal loudness contours, which indicate the perceived difference when a (simple) sound at a particular dB SPL level changes frequency.

A different way to see the contours is the amount of amplitude change the ear applies for a tone at a frequency and amplitude.

There are of course other effects on how you hear each frequency, some from the environment and physics (interference, absorption), some psychoacoustic (frequency masking, temporal masking, listener fatigue, reaction to pulses), some related to quality of your reproduction hardware (headphones often offer better detail than speakers, cheap sound cards may actually alias) and others.

There are other effects that you could call psychoacoustic. For example, our judgment of how loud a sound system is depends not only on how loud it is but also on how much it is distorting.

If you digitize the equal loudness curves, take medium sound levels, flip it to gear it towards subtracting (probably with a max of 0dB, so you don't accidentally cause this step to overdrive something else), you'll get something like the graph on the right. With this data it is fairly simple to adjust post-FFT data for loudness -- at least, for better than not doing this.

See also:

- Fletcher, H., and Munson, W.A. Loudness, its Definition, Measurement, and Calculation. Journal of the Acoustical Society of America, 5, (2), 82-108, 1933

- Robinson, D. W., and Dadson, R.S. A Redetermination of the Equal-loudness Relations for Pure Tones. British Journal of the Applied Physics, 7, 166-181, 1956

- Wikipedia: Equal loudness contour

- http://www.phys.unsw.edu.au/jw/dB.html (Phons, Sones, dbA, dbC)

- http://www2.sfu.ca/sonic-studio/handbook/Phon.html (Phon)

- Wikipedia: A-weighting

- Wikipedia: ITU-R 468 noise weighting

Phons and sones were proposed perceptual loudness units / experiments, and neither are in particularly regular use other than being referenced by some definitions.

Phons: At 1kHz, 1 phon is defined as 1 dB SPL. For other frequencies it is adjusted following the equal loudness curves.

Sones: A phon-based exponential scale with base two. The definition says that at 1kHz, 1 sone is 40 phons (probably because that makes 1 sone a practical quiet sound rather than a theoretical hearing limit). The idea behind the base two is that a perceptual doubling of loudness (~10dB) means a doubling of the sones:

| sones | phons / dB SPL at 1kHz |
|---|---|
| 0.5 | 30 (verify) |
| 1 | 40 |
| 2 | 50 |
| 4 | 60 |
| 8 | 70 |
| 16 | 80 |
| 32 | 90 |
| 64 | 100 |
| 128 | 110 |
| 256 | 120 |
| 512 | 130 |
| 1024 | 140 |

(For frequencies other than 1kHz this must be adjusted according to equal loudness curves).
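
The doubling-per-10-phons relation can be written as a short sketch. The function names are illustrative, and the relation is generally only taken to hold from around 40 phons upward, which fits the (verify) on the 30-phon entry.

```python
import math


def phons_to_sones(phons):
    """Sones double for every 10 phons, with 1 sone pinned at 40 phons."""
    return 2.0 ** ((phons - 40.0) / 10.0)


def sones_to_phons(sones):
    """Inverse of the above."""
    return 40.0 + 10.0 * math.log2(sones)


for phons in (40, 50, 60, 100):
    print(phons, phons_to_sones(phons))
```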

Largely-associative perceptions

Consider:

- a whisper right in your ear might be louder than the traffic outside,

- but we seem to correct for knowing that constant wide-band background noise tends to mean lots of noise.

- If a sound distorts it feels louder,

- even if that was a little transistor or plugin doing that for you,

- and the amplitude, peak, or even RMS is the same.

- sidechained ducking - the thing in (mostly electronic) music where the amplitude of all other instruments is lowered around a bass drum hit.

- It's objectively quieter but the kick is better defined, and we associate it with loud techno.

Flavours of decibel

dB SPL

Around sound you often use dB SPL (Sound Pressure Level) (so common that it's often implied even if people forget the 'SPL'), referenced to 20µPa (RMS).
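
A minimal sketch of going between RMS pressure in Pascal and dB SPL, using the 20µPa reference. Pressure is a root-power quantity, hence the factor 20 rather than 10. The function names are made up for illustration.

```python
import math

P_REF = 20e-6  # reference pressure for dB SPL in air: 20 microPascal (RMS)


def pascal_to_db_spl(pressure_pa):
    """dB SPL for an RMS sound pressure given in Pascal."""
    return 20.0 * math.log10(pressure_pa / P_REF)


def db_spl_to_pascal(db_spl):
    """RMS sound pressure in Pascal for a dB SPL figure."""
    return P_REF * 10.0 ** (db_spl / 20.0)


for pa in (20e-6, 2e-3, 1.0, 20.0):
    print(pa, round(pascal_to_db_spl(pa), 1))
```

This puts 20µPa at 0dB SPL, 1 Pascal at about 94dB SPL, and 20 Pascal at 120dB SPL.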

dB inside your computer

Not referenced by anything. (There are too many potential volume knobs that would destroy a reference even if you tried having one)

Often a dBFS thing (decibels relative to full scale). The maximum is 0dB - by definition, and it means nothing other than that definition.
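
As a sketch of what that looks like for a buffer of samples: the peak level relative to the largest representable value. The function name is made up, and the full-scale values (1.0 for float samples, 32768 for 16-bit integers) are assumptions about how your samples are stored.

```python
import math


def peak_dbfs(samples, full_scale=1.0):
    """Peak level of a sample buffer in dB relative to full scale (0 is the maximum)."""
    peak = max(abs(s) for s in samples)
    if peak == 0:
        return float("-inf")  # digital silence has no finite dB value
    return 20.0 * math.log10(peak / full_scale)


print(peak_dbfs([0.1, -1.0, 0.3]))                  # a full-scale sample: 0.0
print(round(peak_dbfs([0.25, -0.5]), 2))            # half of full scale: about -6
print(round(peak_dbfs([16384], full_scale=32768), 2))
```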

dB re 1V/Pa

See also Electronics_project_notes/Audio_notes_-_microphones#Sensitivity

Filtered measures of loudness

You can filter audio before estimating the energy, and would do so for specific purposes, e.g. estimating how bad noise pollution is by focusing on the frequencies you hear more.

Note that most are not designed for complex signals like music (or necessarily even noise). The weighting is still broadly useful, but they do not do enough to be an accurate measure of perceived volume for those. Even the ones that are designed for more complex signals (e.g. ITU-R 468, K-weighting) aren't perfect at that.

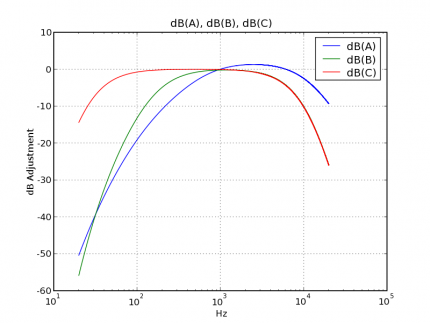

dBA

dB(A) is probably best known among these filters

- commonly used to mechanically measure sound levels

- in roughly human terms

- at relatively quiet levels

- ...making it appropriate to measure lower-level environment noise.

- It mainly emphasizes the 3kHz..6kHz range.

- Apparently it was meant to broadly follow the 40-phon Fletcher-Munson curve, which also means it is most accurate / most appropriate to use at relatively quiet levels (40 phons).

- on dBA and bass: It weights the first few dozen Hz down a lot (30 to 70dB),

- which is also a good way to have a device spec sheet bend the interpretation a little to pretend 50 / 60Hz hum is less there than we would hear.

- Sound level meters on mixing panels and similar might be (roughly) A-weighted -- which is useful when focusing on vocals but quite a poor indication of bass.

- generally assume level meters are not great at indicating bass (though the way they are wrong varies a bunch) until you know what you have
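
For a feel of the numbers, here is a sketch of the commonly published analytic approximation of the A-weighting curve (the pole frequencies 20.6, 107.7, 737.9 and 12194 Hz, plus a 2.00dB offset so that 1kHz comes out at about 0dB). The function name is made up, and this gives only the magnitude response, not a filter you can run audio through.

```python
import math


def a_weighting_db(frequency_hz):
    """Approximate A-weighting gain in dB at a frequency, via the usual analytic formula."""
    f2 = frequency_hz * frequency_hz
    response = (12194.0 ** 2 * f2 * f2) / (
        (f2 + 20.6 ** 2)
        * math.sqrt((f2 + 107.7 ** 2) * (f2 + 737.9 ** 2))
        * (f2 + 12194.0 ** 2)
    )
    # The +2.00 normalizes the curve to roughly 0dB at 1kHz
    return 20.0 * math.log10(response) + 2.00


for hz in (20, 50, 100, 1000, 4000):
    print(hz, round(a_weighting_db(hz), 1))
```

This should show 50Hz weighted down by roughly 30dB and 20Hz by roughly 50dB, which is the bass behaviour described above.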

dBC

dB(C)

- seems meant to

- approximate the ear

- at fairly loud sound levels

- and leaves in more low frequencies, but not enough to evaluate the effect of low bass.

- It seems to often be used in traffic loudness measurements.

Other filtered decibels

- dB(Z) is flat for most of the spectrum (z refers to zero)

- has defined cutoff points

- ...more defined than 'flat' ratings that are otherwise left up to manufacturers(verify)

- ...useful at pointing out that there is a lower and upper limit to production and perception

- while less directly useful for loudness perception unless it is well defined where those falloffs are - but apparently it should be a passband between 10Hz and 20kHz(verify)

- yet arguably the most useful to evaluate potential hearing damage

- not to be confused with dBZ as used in (weather) radar, where it measures relative reflectivity(verify) to e.g. measure amount of rain

- ISO 226 has a more complex frequency response than dBA/B/C, with pre-2003 versions based on the Robinson-Dadson results, and the 2003 version being based on revisions based on more recent equal loudness tests.

- ITU-R 468 (note: ITU-R used to be CCIR), is a better approximation for noise and complex signals (dBA and its family were designed for pure tones), and also models our reduced sensitivity to short bursts and clicks to some degree. R468 has seen a lot of use in some specific fields.

- K-weighting

- broadly similar to the A-weighting curve, but is more sensitive above 2kHz

- (used in LUFS, defined in ITU‑R BS.1770?)

- Old and/or specific-purpose ones include

- dB(B) lies somewhere between A and C, both in terms of frequency response and intended loudness levels. You could say it roughly models the ear at medium sound levels - which arguably makes it more useful than either A or C.

Yet it seems to be rarely used, perhaps because there are better models that are not much more complex.

- dB(D) was intended for loud aircraft noise. It has a peak around 6kHz that models how people sense random noise differently from tones, particularly around there (verify).

- dB(G) focuses primarily around sub-bass (20Hz) and is useful when measuring large slow movements, like wind turbines.

Some weightings have relatively simple circuits, e.g. https://web.archive.org/web/20210507071115/https://sound-au.com/project17.htm

See also:

- http://en.wikipedia.org/wiki/Weighing filter

- http://en.wikipedia.org/wiki/A-weighting

- http://en.wikipedia.org/wiki/ITU-R_468_noise_weighting

- ISO 226

- http://en.wikipedia.org/wiki/ITU-R_BS.468

And perhaps:

- https://midimagic.sgc-hosting.com/spldose.htm

- http://www.phys.unsw.edu.au/jw/dB.html

- http://www.cross-spectrum.com/audio/weighting.html

- http://www.kolumbus.fi/iain.churches/ThermionicThoughts/TubeAmpNoise.html

Getting a little more practical than technical

Getting a feel for decibel SPL numbers

- dB SPL in air: P_reference = 2 * 10^-5 Pascal (RMS) = 20µPa (RMS). (Pascal = N/m^2)

- dB SPL in water: 1µPa (10^-6 Pascal) seems common

In air:

- 0dB SPL is the threshold of hearing (of a 1-2kHz tone)

- 10dB SPL might be quiet breathing, or a falling leaf - measurable but still barely perceptible

- 20dB SPL is a very quiet basement, or perhaps the gentlest of rustling leaves in a very quiet neighborhood (TODO: better examples)

- 30dB SPL is very quiet whispering, a quiet room fan at a few meters; a recording studio or library or otherwise pretty quiet room

- 40dB SPL is

- 50-60dB SPL is a regular conversation or radio at a few meters, a bathroom fan, background music, a microwave, a coffee machine

- 60-80dB SPL is singing, the sound inside a not-very-isolated car, traffic at 1-10m, a loud computer server; 70dB SPL is enough to e.g. disturb a phone call

- 80-90dB SPL is busy traffic, a subway, a blender, a hairdrier, an air compressor, a coffee grinder, or pretty loud music - and starts being a factor in long-term hearing loss

- 100dB SPL is very loud music, a circular saw, loud factory machinery and such

- 110dB SPL (or less) is what most home stereo sets will manage at most

- 120dB SPL is a very loud concert (and 1Watt/m2)

- 130dB SPL is approximately the pain threshold

- 100-140dB SPL is a jet plane at 100m to 30m

- 140dB SPL can do immediate and permanent damage to human ears under many circumstances (130dB is already pushing your luck, as are lower figures if exposed to them a lot), apparently standing right next to an air raid siren will be about this, as will a popped balloon from moderately closeby

- Around 190dB SPL is the theoretical limit of our atmosphere (apparently a Saturn rocket puts out this much). You'd need more pressure to go higher(verify)

Other practicalities

Power quantities versus root-power quantities

See also:

- https://en.wikipedia.org/wiki/Decibel#Power_quantities

- https://en.wikipedia.org/wiki/Decibel#Field_quantities_and_root-power_quantities

- https://en.wikipedia.org/wiki/Field,_power,_and_root-power_quantities
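
In short, and as a sketch only: power-like quantities (power, intensity) use 10*log10 of the ratio, while root-power quantities (pressure, voltage, sample amplitude) use 20*log10, so that the same physical change gives the same decibel figure either way. The function names here are illustrative.

```python
import math


def power_ratio_to_db(ratio):
    """Decibels for a ratio of power-like quantities (power, intensity)."""
    return 10.0 * math.log10(ratio)


def root_power_ratio_to_db(ratio):
    """Decibels for a ratio of root-power quantities (pressure, voltage, amplitude)."""
    return 20.0 * math.log10(ratio)


amplitude_ratio = 2.0
power_ratio = amplitude_ratio ** 2  # power goes with the square of amplitude

print(round(root_power_ratio_to_db(amplitude_ratio), 2))
print(round(power_ratio_to_db(power_ratio), 2))
```

Both lines print the same figure, a little over 6dB: doubling the amplitude is the same change as quadrupling the power.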

Decibels and distance

While sound sent through a pipe will carry a long way because it's diminished mostly by losses (lost to the pipe, and self-interference; sort of like a waveguide), most sound (and EM) in the real world is less focused, sound usually much less so.

When emitted more or less equally in all directions, it diminishes quickly.

Somewhat intuitively, the falloff comes from the same energy having to spread over an ever-larger sphere, which means the level in decibels falls over distance in a very predictable way, so the more distance you have, the more energy you need to have someone standing there hear the same levels.

How quickly does it fall off, how much more energy for the same level?

Well, read up on the inverse square law.

- (Roughly the same applies to cases of sound, light and radio waves and other EM, and more).

This comes with some footnotes: Near field details also apply, which changes that curve a little when you're close to the source. It's frequency-dependent, even, which is why the proximity effect and others are a thing. We typically ignore both those details, partly because it's relatively subtle, and partly because it's too much work until you care about the details.
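
Ignoring those footnotes (so assuming a point source radiating equally in all directions, with no reflections or absorption), the inverse square law can be sketched as below. The function names are made up for illustration.

```python
import math


def intensity_ratio(distance_from, distance_to):
    """How much of the intensity is left when moving from one distance to another."""
    return (distance_from / distance_to) ** 2


def level_change_db(distance_from, distance_to):
    """Change in level, in dB, when moving from one distance to another."""
    return -20.0 * math.log10(distance_to / distance_from)


for meters in (2, 4, 10, 100):
    print(meters, intensity_ratio(1, meters), round(level_change_db(1, meters), 1))
```

Each doubling of distance costs about 6dB, and each factor ten in distance costs 20dB, which also tells you how much more source power you need for the same level further away.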

RMS

Consider a sine wave.

Its amplitude refers to its peaks, but those peaks are not a direct measure of the (average) energy that needs to be used to put out that signal.

To get such an effective power or effective pressure figure for a signal, you usually calculate its root mean square (RMS).

For a sine wave, the RMS figure lies around 0.707 (sqrt(2)/2) of the peak of the waves (that 0.7ish is also used for some quick and dirty estimations in some situations)
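
A minimal sketch of the calculation, checking that 0.707 figure on one evenly sampled cycle of a sine wave with peak 1. The function name and the sample count are arbitrary.

```python
import math


def rms(samples):
    """Root mean square: square each sample, take the mean, then the square root."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


n = 1000
sine = [math.sin(2 * math.pi * i / n) for i in range(n)]

print(round(rms(sine), 3))   # close to 0.707
print(math.sqrt(2) / 2)      # the figure it should approach
```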

Note that:

- dB SPL figures are generally understood to be RMS measurements. (verify)

- the figure will be less accurate for more complex signals

- this is separate from weighting;

- if you are showing figures for human consumption, you may want RMS after filtering for hearing

If what we sample is much shorter than a wavelength, that power figure becomes less accurate

Ideally, you should use a window size of at least two wavelengths of lowest frequency you wish to accurately measure, which for sound generally means at least a few dozen milliseconds.
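
To illustrate the window size point, this sketch computes RMS over consecutive windows of a 50Hz sine, once with windows of two full cycles and once with windows of an eighth of a cycle. The sample rate, the frequency and the function name are arbitrary choices for the example.

```python
import math


def windowed_rms(samples, window):
    """RMS of each consecutive, non-overlapping window of the given length."""
    out = []
    for start in range(0, len(samples) - window + 1, window):
        chunk = samples[start:start + window]
        out.append(math.sqrt(sum(s * s for s in chunk) / window))
    return out


RATE = 8000   # samples per second
FREQ = 50     # Hz, so one cycle is 160 samples
signal = [math.sin(2 * math.pi * FREQ * i / RATE) for i in range(RATE)]

long_windows = windowed_rms(signal, 2 * RATE // FREQ)    # two full cycles each
short_windows = windowed_rms(signal, RATE // FREQ // 8)  # an eighth of a cycle each

print(round(min(long_windows), 3), round(max(long_windows), 3))
print(round(min(short_windows), 3), round(max(short_windows), 3))
```

The long windows all report about 0.707, while the short windows swing widely depending on which part of the wave they happen to cover.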

It is not always well defined how this responds to transients (sudden spikes) - nor how we would care to see those,

so this could be a feature or a bug, depending on what you're doing.

Having multiple channels (stereo or more) also changes things.

You can see them as separately adding energy to the room, but just adding them isn't entirely precise, because phase-based destructive interference comes into play (sometimes intentional, sometimes not), and it won't happen the same way in the signal as in the room.

This can be overstated: in the case of music you can mostly get away with simply adding them, as the channels tend to be roughly similar and add constructively. Still, instead of mixing the channels and measuring the result, you may wish to calculate RMS per channel and combine the figures.

It is always hard to estimate the delivered power, and its perception more so.

Some real-world loudness metrics

VU meter, Peak Programme Meter

A VU meter ('Volume Unit') was historically implemented by a galvanometer used as ammeter, being a simple and almost passive component that could show signal strength.

Studio VU meters are standardized in that 0VU is +4dBu - but with footnotes, flaws, and biases (intentional and not).

- The needle's mass (and the spring it acts against) makes it non-instant, effectively introducing a lowpass and a slow falloff effect.

- When you want to know "roughly how loud were things recently, on average", both of these things are arguably a feature

- they had at most basic filtering, which means they forget that we don't hear frequencies equally.

- for example, significant bass would make many VU meters spike higher than human ears would hear it.

- There are a few standards around VU meters, but you wouldn't necessarily know whether anything adhered to it

- Consumer market VU meters rarely did.

- VU meters also don't tell you about peaks and transients, though, and that is more important for broadcast.

...so the Peak Programme Meter (PPM) is essentially a more recent, better-quantified, and more specific-purpose variation on the VU meter.

PPMs are defined to do filtering and, if physical, to actively drive the needle, with fast attack and slower release (presumably in part because of the needle, and in part because that's still useful).

Even where they do much the same as a VU meter, they are more of a known quantity.

While modern PPMs are the things to use for more accurate peak monitoring (to conform to broadcast standards, better notice clipping, and such), they are still not necessarily better at showing rough perceived loudness.
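The fast-attack, slower-release behaviour can be imitated digitally with a simple level follower. This is only a sketch of the general idea: the function name and the time constants are placeholders for illustration, not values taken from any PPM standard.

```python
import math

def envelope(samples, rate, attack_s=0.005, release_s=1.5):
    """One-pole level follower with separate attack and release time constants.

    The default times are placeholders for this sketch, not standardized values.
    """
    attack = math.exp(-1.0 / (attack_s * rate))
    release = math.exp(-1.0 / (release_s * rate))
    level = 0.0
    out = []
    for s in samples:
        x = abs(s)                                 # rectify
        coeff = attack if x > level else release   # rise fast, fall slowly
        level = coeff * level + (1.0 - coeff) * x
        out.append(level)
    return out

rate = 1000
# 100ms of full-scale signal followed by 100ms of silence
burst = [1.0] * 100 + [0.0] * 100
env = envelope(burst, rate)

print(round(env[99], 3))    # has risen to (nearly) full level within the burst
print(round(env[199], 3))   # has only dropped a little 100ms after the burst ended
```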

A PPM will often indicate:

- a typical permitted max (which you can cross, but don't want to cross frequently / intentionally)

- e.g. at -10dB of its max. (actually typically -9dB, arbitrary but with reasons)

- alignment level (where you typically want the signal to hang around)

- e.g. at -20dB of its max

- called alignment level because in broadcast, devices are calibrated to this (using a standard sine signal)

- which also makes things more human-sensible if you have various devices in your chain

Peak and hold - PPM (and some other meters) may indicate both a fairly responsive level that is updated constantly, and one that holds a longer-term maximum.

Depending on implementation, the held maximum stays for a given time and then disappears, or slowly decays over time.

This is mostly useful to check on the loudness of sudden noises, which after all was the point of PPM.

Further notes

- Quasi-PPM will show peaks less intensely when they are shorter than a few ms; True PPM will show peaks however brief.

- This is effectively an integration time thing.

- Digital PPM may be Sample PPM (SPPM), which uses max(abs(samples)) because that's simple to calculate

- Note that this can still be ~1dB below the actual waveform peaks (see true peaks).

- VU and PPM meters rarely show more than 60dB of range, and broadcast may focus on 30dB (presumably due to more compression).

- PPMs are tied to dBu levels - but there are a number of different standards. ...because of course there are.

- As such, VU and PPM only roughly relate to each other (until you know everything is adhering to the same standard).
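To illustrate the Sample PPM note above (sample peaks can sit below the actual waveform peaks), here is a deliberately contrived sketch: a full-scale sine at a quarter of the sample rate, phase-shifted so that no sample lands on a crest. The gap in this constructed case is larger than you would typically see on real material.

```python
import math

def sample_peak_dbfs(samples):
    """Sample peak in dBFS: the largest absolute sample value, relative to full scale 1.0."""
    return 20.0 * math.log10(max(abs(s) for s in samples))

# Full-scale sine at a quarter of the sample rate, shifted by 45 degrees,
# so every sample lands at about +-0.707 and never on the actual crest.
samples = [math.sin(math.pi / 2 * n + math.pi / 4) for n in range(64)]

print(round(sample_peak_dbfs(samples), 2))  # about -3 dBFS, though the waveform itself peaks at 0 dBFS
```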

digital VU and digital PPM meters

dBFS

LUFS

Sample peak versus true peak

Sound level meter notes

Sound level meters, a.k.a. sound pressure level meters or decibel meters, measure the absolute level of sound, usually referenced to the human hearing threshold.

Typically:

- frequency range: 35ish Hz to 4kHz or 8kHz or such

- ...the range most relevant to most workplace/industrial hearing damage(verify)

- broadly, for e.g. voice dBA can be more suitable, for noise pollution dBC can be more suitable(verify)

- speed: average over ~125ms or ~1s (fast and slow settings)

- and sometimes a ~35ms 'impulse', but it doesn't have a lot of uses (other than specifically noticing or specifically ignoring transients?)

- range: ~30dB to ~130dB SPL, usually

- measuring levels lower than 30dB SPL is a specialist thing indeed

- dynamic range is typically lower (~50dB, possibly less) (so they do some adaptive / configurable gain stuff?)

- precision: the aim is usually within 2dB, and if certified, within 1.5dB or 1.0dB (depending on class)

If your goal is providing a safe work environment, particularly if that safety is tied to some regulation, that implicitly requires certain precision and certain features.

Such meters are more expensive, though probably most of the price lies in calibration and certification being partly handwork (which frequently also implies you need to regularly send the meter in for recalibration), and presumably also in the fact that you will pay for it if you need it.

Say, there is IEC 61672-1 (cf. EN 61672, DIN 15905-5) that defines class 1 and class 2 meters. Roughly speaking,

- class 1 is precise to approximately 1dB, considers a wider frequency range, and considers the effect of environment temperature on the measurement electronics(verify).

- It's more expensive, and typically comes with calibration certification

- class 2 is precise to approximately 1.5dB - and can still be a lot better than low-cost meters that don't mention certification at all.

Without certification, things can get a lot cheaper, even if their performance is actually close to class 2.

Just without any guarantee that it is.

Where the certified ones start around 1000 or 3000 bucks, you can get a no-guarantees one for 20, a good-enough one for 60 or so, a fancier one for 300. (orders of magnitude).

Features you may see named

- Integrating – averaging over time. Most do, cheap ones may only give you instantaneous values

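- LAeq – A-weighted equivalent continuous level (an energy average over the measurement time)

- LCeq – C-weighted equivalent continuous level (C weighting lets through more of the higher and lower frequencies)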

- LCPeak – C-weighted peak - good if impact noise and such noise is more important than the average

- octave bands - a coarse way of figuring out dominant frequencies(verify) which can be useful when choosing the most fitting hearing protection

- LEP,d - daily personal exposure level, roughly sound level times time - and calculated from some samples rather than measured fully

- LEX,8h is the EU name, LEP,d is the UK name

- separated mic

- data logging

- bluetooth/usb logging/readout
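For the integrating figures in the list above (LAeq and the daily exposure level), a minimal sketch of the arithmetic, assuming you already have A-weighted level readings taken at equal time intervals. The function names are made up for this example, and a real meter does this on the pressure signal rather than on logged dB values.

```python
import math

def leq(levels_db):
    """Equivalent continuous level of equally spaced level readings (in dB).

    Averages in the energy domain, not the dB domain.
    """
    mean_energy = sum(10.0 ** (l / 10.0) for l in levels_db) / len(levels_db)
    return 10.0 * math.log10(mean_energy)

def daily_exposure(laeq_db, hours, reference_hours=8.0):
    """Normalize an LAeq measured over `hours` of exposure to an 8-hour working day."""
    return laeq_db + 10.0 * math.log10(hours / reference_hours)

print(round(leq([80.0, 90.0]), 1))          # about 87.4: dominated by the louder half
print(round(daily_exposure(88.0, 4.0), 1))  # about 85.0: half a day at 88dB
```

Note how the energy average of 80dB and 90dB lands well above the arithmetic mean of 85dB, which is why short loud periods matter so much for exposure.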

On loudness targets

On ads

Unsorted

https://blog.frame.io/2017/08/09/audio-spec-sheet/

https://www.soundonsound.com/sound-advice/q-whats-difference-between-ppm-and-vu-meters

Most volume bars are sort of wrong, but in a sort of useful way

See also

Intensity, perceived intensity:

- http://www.physicsclassroom.com/Class/sound/u11l2b.html

- http://www2.sfu.ca/sonic-studio/handbook/Decibel.html

- http://www.resonancepub.com/unwateracou.htm

- What is a decibel?, http://www.phys.unsw.edu.au/~jw/dB.html#log

- Sound Power, Intensity and Pressure, http://web.archive.org/web/20041207113839/http://www.engineeringtoolbox.com/27_57.html

- Fletcher, H., and Munson, W.A. Loudness, its Definition, Measurement, and Calculation. Journal of the Acoustical Society of America, 5, (2), 82-108, 1933

- Phon, Aug 2004, http://www2.sfu.ca/sonic-studio/handbook/Phon.html

- Equal loudness tester, Aug 2004, http://www.phys.unsw.edu.au/~jw/hearing.html

- sound power , Intensity and Pressure, http://www.engineeringtoolbox.com/27_57.html

- Replay Gain - RMS Energy, http://replaygain.hydrogenaudio.org/rms_energy.html

- ANSI S1.1-1994

More results of physiology

Psycho-acoustics is a study of various sound response and interpretation effects that happen in the source-ear-brain-perception path, particularly the ear and brain.

There are various complex topics in (human) hearing. If you mostly skip this section, the concepts you should probably know about are the varying sensitivity to frequencies, and masking and such, and you should know that practical psycho-acoustic models (used e.g. for things like sound compression) are mostly a fuzzy combination of various effects.

Frequency perception

In music, tones are usually seen in a way that originates in part from scientific pitch notation, in which an octave is a doubling of frequency. This already illustrates the non-linear nature of human hearing, but is in itself not actually an accurate model of human pitch perception.

Accurate comparison of frequencies and frequency bands can be an involved subject.

Perceived equal frequency intervals/distances are not easily caught, even in a more complex function. If you've ever heard a frequency generator slowly and linearly increase the frequency, you'll know that it sounds to us like fast changes at the start, and past ~6kHz it's all a slightly changing high beep.

In addition, or perhaps in extension, the accuracy with which we can judge similar tones as not-the-same changes with frequency, and is also not trivial to model.

Frequency warping is often applied to attempt to linearize perceived pitch, something that can help various perceptual analyses and visualizations.

As frequency increases, the critical bandwidth increases and the pitch resolution we hear decreases.

The non-linear nature of frequency hearing, and the existence and approximate size of critical bandwidths, are useful information when we want to model our hearing.

It is also useful information for things like lossy audio compression, since it tells us that spending equal space on perceived tones means we should expend coding space non-linearly with frequency.

For background: Mathematical frequency intervals

In the mathematical scientific pitch notation, an octave refers to a doubling of the frequency. Cents are a (log-based) ratio defined so that there are 1200 steps in an octave, each step being a factor of 2^(1/1200).

Note that the human Just Noticeable Difference between tones is around 5 cents, although there are reasons that tuning should ideally be more accurate than that, particularly for instruments with overtones, in which there can be more audible dissonance (verify).
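The cents definition translates directly into code. A small sketch, with function names chosen for this example:

```python
import math

def cents(f1, f2):
    """Interval from f1 to f2 in cents (1200 cents per octave)."""
    return 1200.0 * math.log2(f2 / f1)

def shift_by_cents(f, c):
    """Frequency reached by moving c cents up from f (negative c moves down)."""
    return f * 2.0 ** (c / 1200.0)

print(cents(440.0, 880.0))                    # one octave, so 1200 cents
print(round(shift_by_cents(440.0, 5.0), 2))   # 5 cents above 440Hz is about 441.27Hz
```

So the ~5 cent Just Noticeable Difference mentioned above corresponds to a bit over 1Hz around 440Hz.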

Notes can be referenced in a note-octave, a combination of semitone letter and the octave it is in.

This scale is typically anchored at A4, typically settling that as 440Hz - concert pitch, but there are variations.

The limit of 88 keys on most pianos, good for seven and a quarter octaves, comes from a "...that's enough" (88-key is A0 to C8 - below A0 it's more of a rumble, above C8 it's shrill, though there are some slight extensions). That makes its middle C be C4.
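For a sense of the frequencies involved, a sketch that assumes twelve-tone equal temperament (every semitone a factor of 2^(1/12)) and A4 = 440Hz. The equal temperament assumption and the key numbering (1 = A0, 49 = A4, 88 = C8) are choices made for this example.

```python
def piano_key_frequency(key, a4=440.0):
    """Frequency of the n-th key on an 88-key piano (1 = A0, 49 = A4, 88 = C8).

    Assumes twelve-tone equal temperament anchored at A4.
    """
    return a4 * 2.0 ** ((key - 49) / 12.0)

print(round(piano_key_frequency(1), 2))    # A0, 27.5Hz
print(round(piano_key_frequency(40), 2))   # C4 (middle C), about 261.63Hz
print(round(piano_key_frequency(88), 2))   # C8, about 4186.01Hz
```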

See also:

- http://en.wikipedia.org/wiki/Octave

- http://en.wikipedia.org/wiki/Cent_(music)

- http://en.wikipedia.org/wiki/Semitone

- A lot of music theory

Bandwidths, frequency warping, and more

Note that studies and applications in this area usually address multiple things at once, usually one or more of:

- estimation of the width of the critical band of a band / at a frequency

- frequency warping (Hz to a perceptually linear scale). (Note that a fit to critical band data can often be a decent approximate warper)

- working towards a filterbank design that is an accurate model in specific or various ways (there are a number of psychoacoustic effects that you could wish to model, or ignore to keep things simple)

Also useful to note:

- since some terms refer to a general concept, they may refer to different formulae, and to different publications that pull in more or fewer related details than others.

- There are a good amount of convenient approximations around, which tend to confuse various summaries (...such as this one. I'll warn you against assuming this is all correct.)

- while bands are sometimes reported as given widths around given centers, particularly for approximate filterbank designs, bands do not really have fixed positions. It is more accurate to consider a function that reports a width for arbitrary frequencies.

- There is often some rule-of-thumb knowledge, and various models and formulae that are more accurate than either of those - but many formulae are still noticeably specific and/or inaccurate, often mostly for lowish and for high frequencies.

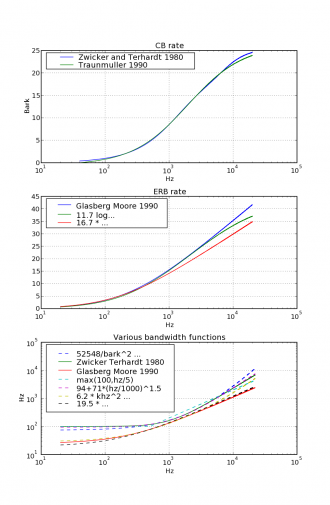

It's useful to clearly know the difference between critical band rate functions (mostly a frequency warper, a function from Hz to fairly synthetic units) and critical bandwidth functions (estimation of the bandwidth of hearing at given frequency, a Hz→Hz function). They are easily confused because they have similar names, similarly shaped graphs, and the fact that they approximate rather related concepts.

A quite understandable source of confusion is that in many mentions of critical band rate, it is noted that the interval between whole Bark units corresponds to critical bandwidths. This is approximately true, but approximately at best. It is often a fairly rough attempt to simplify away the need for a separate critical bandwidth function. One of the largest differences between these two groups seems to be the treatment under ~500Hz, and details like that ERB lends itself to filterbank design more easily.

Another source of confusion is naming: Earlier models often have a name involving 'critical band', later and different models often mention ERB (equivalent rectangular bandwidth), and it seems that references can quite fuzzily refer to those two approximate sets, the whole, or sometimes the wrong ones, making these names rather finicky to use.

If you like to categorize things, you could say that you have CB rate functions (Bark units), ERB rate functions (ERB units), and from both those areas there are bandwidth functions.

Critical bands

Critical band rate:

- Primarily a frequency warper

- Units: input in Hz, output (usually) in Bark

- often given the letter z

- approximately linear to input frequency up to ~200Hz (~0 to 2 bark)

- approximately linear to log of input frequency for ~500Hz..10kHz (~5 to 22 bark)

Critical bandwidth:

- a function to approximate the width of a critical band at a given frequency (Hz->Hz)

- Units: input in Hz, output in Hz

- useful for model design, usually along with a frequency warper

- approximately constant up to ~500Hz (sized ~100Hz per band)

- approximately proportional to input frequency above ~1kHz (sized ~20% of center frequency)

ERB

Equivalent Rectangular Bandwidth (ERB) and ERB-rate formulae (both introduced some time after most of the mentioned critical-band and bandwidth scales) approximate the relationship between frequency and the ear's corresponding critical bandwidth.

More specifically, they do so using a modeled rectangular passband filter (with the same pass-band center as the auditory filter it models, and a similar response to white noise).

For low frequencies (below ~500Hz), ERB bandwidth functions will estimate bandwidth noticeably smaller than critical bandwidth does.

There are a number of different investigations, measurements, and approximations to calculate an ERB. The formulae most commonly used seem to be from (Glasberg & Moore 1990)(verify).

Bark scale

The term Bark scale (somewhat fuzzily) refers to most critical band rate functions.

The Bark unit was named in reference to Heinrich Barkhausen (who introduced the phon).

Using bark as a unit was proposed in (Zwicker 1961), which was also perhaps the earliest summarizing publication on critical band rate (and makes a bark-unit-is-approximately-a-bandwidth note), and which reports a number of centers, edges.

Bark is related to, but somewhat less popular than the Mel scale.

The value of the Bark scale in tables often goes from 1 to 24, though in practice it can be a function meant to be usable from 0 to 25. (The 24th band covers a band up to 15.5kHz. The 25th is easily extrapolated, and useful to e.g. deal with 44kHz/48kHz recordings.)

Mel scale

There is also the Mel scale, which like Bark was based on empirical data, but has slightly different aims

- Frequency warping scale that aims for linear perceptual pitch units (verify)

- Defined as f(hz) = 1127.0148 * ln(1 + hz/700)

- Which is the same as 2595 * log10(1 + hz/700)

- and some other definitions (approximation or exactly?)

- The inverse (from mel to Hz) is f(m) = 700 * (e^(m/1127.0148) - 1)
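The mel formulae above, written out as a small sketch (function names are chosen for this example):

```python
import math

def hz_to_mel(hz):
    """Hz to mel, using the natural-log form with the 1127.0148 constant."""
    return 1127.0148 * math.log(1.0 + hz / 700.0)

def mel_to_hz(mel):
    """Inverse of hz_to_mel."""
    return 700.0 * (math.exp(mel / 1127.0148) - 1.0)

print(round(hz_to_mel(1000.0), 1))             # 1000Hz lands at roughly 1000 mel
print(round(mel_to_hz(hz_to_mel(4000.0)), 3))  # round trip gives back 4000Hz
```

The constants are chosen so that 1000Hz maps to about 1000 mel, which makes for a handy sanity check on any implementation.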

Various approximating functions

CB rate

Bark according to Zwicker and Terhardt 1980:

13*arctan(hz*0.00076) + 3.5*arctan((hz/7500)^2)

- error varies, up to ~0.2 Bark

Bark according to Traunmuller 1990:

f(hz) = (26.81*hz)/(1960.0+hz) - 0.53

- seems more accurate than the previous, particularly for the 200-500Hz range

- seems to be the more usual function in use
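Both Bark approximations as a small sketch, mostly to make it easy to compare them at a given frequency (function names are chosen for this example):

```python
import math

def bark_zwicker_terhardt(hz):
    """Critical band rate (Bark) per the Zwicker and Terhardt 1980 formula above."""
    return 13.0 * math.atan(0.00076 * hz) + 3.5 * math.atan((hz / 7500.0) ** 2)

def bark_traunmuller(hz):
    """Critical band rate (Bark) per the Traunmuller 1990 formula above."""
    return 26.81 * hz / (1960.0 + hz) - 0.53

for hz in (100.0, 500.0, 1000.0, 4000.0):
    print(hz, round(bark_zwicker_terhardt(hz), 2), round(bark_traunmuller(hz), 2))
```

At 1000Hz both come out at about 8.5 Bark; they differ more at the low end.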

ERB rate

11.17 * ln( (hz+312)/(hz+14675) ) + 43.0

(mentioned at least in Moore and Glasberg 1983)
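The same ERB rate formula as a sketch, with the input in Hz as written above (the function name is chosen for this example):

```python
import math

def erb_rate(hz):
    """ERB rate (in ERB units) for a frequency in Hz, per the formula above."""
    return 11.17 * math.log((hz + 312.0) / (hz + 14675.0)) + 43.0

print(round(erb_rate(0.0), 2))      # close to zero at 0Hz
print(round(erb_rate(1000.0), 2))   # roughly 15.3 at 1kHz
```

That the scale starts at approximately zero for 0Hz is a quick check that the constants were copied correctly.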

Bandwidth

Very simple rule-o-thumb approximation ('100Hz below 500Hz, 20% of the center above that'):

max(100,hz/5)

(Zwicker Terhardt 1980, or earlier?)

25 + 75*( 1 + 1.4*(hz/1000.)^2 )^0.69

...with bark (and not Hz) as input:

52548 / (z^2 - 52.56*z + 690.39)

6.23*khz^2 + 93.39*khz + 28.52
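The bandwidth formulae above, side by side as a sketch. The function names are made up for this example and deliberately neutral, since not all of the formulae are attributed above. Note the different inputs: Hz for most, Bark for one, and kHz inside the polynomial (converted from Hz here).

```python
def bandwidth_rule_of_thumb(hz):
    """'100Hz below 500Hz, 20% of the center above that'."""
    return max(100.0, hz / 5.0)

def bandwidth_power_law(hz):
    """25 + 75*(1 + 1.4*(hz/1000)^2)^0.69, input in Hz."""
    return 25.0 + 75.0 * (1.0 + 1.4 * (hz / 1000.0) ** 2) ** 0.69

def bandwidth_from_bark(z):
    """52548 / (z^2 - 52.56*z + 690.39), input in Bark (not Hz)."""
    return 52548.0 / (z * z - 52.56 * z + 690.39)

def bandwidth_polynomial(hz):
    """6.23*khz^2 + 93.39*khz + 28.52, where khz is the frequency in kHz."""
    khz = hz / 1000.0
    return 6.23 * khz ** 2 + 93.39 * khz + 28.52

# Compare at 1kHz, which is roughly 8.5 Bark
print(bandwidth_rule_of_thumb(1000.0))
print(round(bandwidth_power_law(1000.0), 1))
print(round(bandwidth_from_bark(8.527), 1))
print(round(bandwidth_polynomial(1000.0), 2))
```

At 1kHz these give roughly 200, 162, 167 and 128Hz respectively, which gives a feel for how much the estimates differ.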

See also (bandwidths)

- Traunmüller (1990) "Analytical expressions for the tonotopic sensory scale", J. Acoust. Soc. Am. 88: 97-100

- Glasberg, Moore (1990), "Derivation of auditory filter shapes from notched-noise data", Hear. Res. 47, 103-138

- Moore, Glasberg (1987) "Formulae describing frequency selectivity as a function of frequency and level, and their use in calculating excitation patterns"

- Moore, Glasberg (1983) "Suggested formulae for calculating auditory-filter bandwidths and excitation patterns", J. Acoust. Soc. Am. 74: 750-753

- Zwicker, Terhardt (1980), "Analytical expressions for critical-band rate and critical bandwidth as a function of frequency", J. Acoust. Soc. Am., Volume 68, Issue 5, pp.1523-1525

- Zwicker (1961) "Subdivision of the audible frequency range into critical bands (Frequenzgruppen)", J. Acoust. Soc. Am. 33: 248

Unsorted:

- http://www.ling.su.se/staff/hartmut/bark.htm

- http://www.sfu.ca/sonic-studio/handbook/Critical_Band.html

- http://en.wikipedia.org/wiki/Equivalent_rectangular_bandwidth

Filterbanks

The idea here is to get a basic imitation of the ear's response throughout the frequency scale.

While the following implementation is still a relatively basic model of the human cochlea, it reveals various human biases in hearing sound, and as such is quite convenient for a number of perceptual tasks.

The common implementation is a set of passband filters, often with overlapping response, to model response to different frequencies.

On the (~34mm-long) basilar membrane, a sound will tend to excite roughly 1.5mm of it at a time, which leads to the typical choice of using 20 to 24 of these filters.

That number of centers, and the according bandwidths (which are not exactly based on the 1.5mm) vary between different models' assumptions, and on the frequency linearization/warping used and the upper limit on frequency that you want the model to include. (For a sense of size: the more important bandwidths are usually on the order of 100 to 1000Hz wide)
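As one possible way to pick filter centers, the sketch below spaces them evenly on a Bark scale and converts back to Hz. It assumes the Traunmüller (1990) Bark approximation, inverted algebraically, and it places centers at half-integer Bark values. Both are choices made for this illustration, not the layout of any particular model.

```python
def hz_to_bark(hz):
    """Traunmuller-style Bark approximation."""
    return 26.81 * hz / (1960.0 + hz) - 0.53

def bark_to_hz(z):
    """Algebraic inverse of hz_to_bark (valid for z below 26.28)."""
    return 1960.0 * (z + 0.53) / (26.28 - z)

def band_centers(n_bands=24):
    """Center frequencies (Hz) at 0.5, 1.5, ... Bark; the half-integer placement is arbitrary."""
    return [bark_to_hz(i + 0.5) for i in range(n_bands)]

centers = band_centers()
print([round(c) for c in centers])
```

The resulting centers are closely spaced at the low end and spread out at the high end, which is the non-linear spacing the text describes.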

That frequency resolution may seem low, but is quite decent considering the size of the cochlea.

The Just Noticeable Differences seem to be sub-Hz at a few hundred Hz, up to ten Hz at a few kHz, up to perhaps ~30Hz at ~5-8kHz.

Reports vary considerably, probably because pure sines are much easier than sounds with timbre, vibrato, etc. so it can easily double over these values. (verify)

Regardless, note that this is much better than the ~1.5mm excitation suggests; there is obviously more at work than ~24 coefficients, and for us, much of this happens in post-processing in the brain.

Masking effects

Listener fatigue

Hearing damage

Unsorted psychoacoustic effects

Localization

Selective attention